Lecture 3 - Part 1

Contents

- Introduction

- Probability Theory

- References

Introduction

Probability Theory

Before introducing the detection, estimation, and forecasting techniques in detail, it is important to understand some key probabilistic concepts. Probability theory merits an entire course of its own, so one chapter cannot cover every aspect. Interested readers are referred to [1] for a detailed exposition of the topic.

Sample Space

Let us consider an experiment, e.g., tossing a coin, rolling a die, or predicting where the ball lands in roulette. The outcome of such an experiment is random and cannot be predicted with certainty. Nevertheless, we do know that tossing a coin results in heads or tails, and that rolling a die results in a number between 1 and 6. Consequently, while the outcome of the experiment is random and not known in advance, the set of all possible outcomes is known.

Definition

The set of all possible outcomes of an experiment is known as the Sample Space and is commonly denoted by S.

Examples

- Coin Toss: For a single coin toss, $S_{\text{coin}} = \{H, T\}$, i.e., the outcome is either heads (H) or tails (T).

- Student Exam: Consider a cohort of five students sitting an exam. The outcome is the ranking of the students after the test, i.e.,

$$S_{\text{exam}} = \{\text{all } 5! = 120 \text{ permutations of } (1, 2, 3, 4, 5)\},$$

where the outcome (2,3,4,1,5), for instance, means that the student with ID 2 came first in the test, and so on.

- Double Coin-toss: When flipping two coins, the sample space consists of all possible combinations:

$$S_{dc} = \{(H,H), (H,T), (T,H), (T,T)\}.$$

One can appreciate from this example that $S_{dc}$ is the product space of two single coin-toss sample spaces, i.e., $S_{dc} = S_{\text{coin}} \times S_{\text{coin}}$.
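The three sample spaces above can also be enumerated programmatically. The following is a minimal Python sketch; the variable names (`S_coin`, `S_exam`, `S_dc`) are illustrative choices that mirror the symbols used in the examples.

```python
from itertools import permutations, product

# Single coin toss: heads (H) or tails (T).
S_coin = {"H", "T"}

# Student exam: every ranking of the five student IDs is one outcome.
S_exam = set(permutations([1, 2, 3, 4, 5]))

# Double coin toss: Cartesian product of two single-toss sample spaces.
S_dc = set(product(S_coin, repeat=2))

print(len(S_coin), len(S_exam), len(S_dc))  # 2 120 4
print((2, 3, 4, 1, 5) in S_exam)            # True
```

Representing outcomes as tuples keeps the order information, which matters both for rankings and for distinguishing (H,T) from (T,H).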

Event

Definition

Any subset of the sample space, $E \subset S$, is known as an event. In other words, an event E is a set of possible outcomes of the experiment.

If the outcome of the experiment is contained in E, we say that event E has occurred. For instance, consider the following examples:

- Rolling a die: When rolling a die, an event can be rolling an even number, i.e.,

$$E = \{2, 4, 6\}.$$

- Double coin-toss: When flipping two coins, an event can be that at least one tail appears, i.e.,

$$E = \{(H,T), (T,H), (T,T)\}.$$

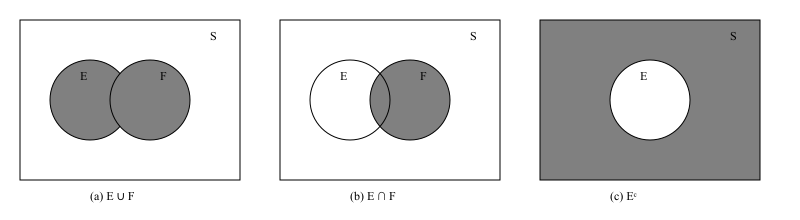

Let E and F be two events in S. The event $E \cup F$, called the union of E and F, occurs when either E or F (or both) occurs. Similarly, the intersection $E \cap F$, also written $EF$, contains all outcomes that belong to both E and F, so it occurs when both E and F occur. If $E \cap F = \emptyset$, then E and F are mutually exclusive events. The set $S \setminus E$ is the complement of E, often denoted by $E^c$ or $\bar{E}$. More generally, unions and intersections of several events are defined as:

$$\bigcup_{i \in I} E_i \quad \text{and} \quad \bigcap_{i \in I} E_i, \quad \text{with } I = \{1, 2, \ldots, N\}.$$

Some examples of these three concepts are as follows:

Example 1: Rolling a Die

The sample space is

$$S = \{1, 2, 3, 4, 5, 6\}.$$

Let

- E={2,4,6} (event that the outcome is even),

- F={4,5,6} (event that the outcome is at least 4).

Then:

- Intersection: $E \cap F = \{4, 6\}$ is the event that the outcome is even and at least 4.

- Union: $E \cup F = \{2, 4, 5, 6\}$ is the event that the outcome is even or at least 4 (or both).

- Complement: $E^c = \{1, 3, 5\}$ is the event that the outcome is odd.
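The die example maps directly onto Python's built-in set operations. This is a small sketch using the same events E and F; `sorted` is used only to make the printed order predictable.

```python
S = {1, 2, 3, 4, 5, 6}   # sample space of one die roll
E = {2, 4, 6}            # outcome is even
F = {4, 5, 6}            # outcome is at least 4

print(sorted(E & F))     # intersection: [4, 6]
print(sorted(E | F))     # union: [2, 4, 5, 6]
print(sorted(S - E))     # complement of E: [1, 3, 5]

# E and its complement share no outcome, so they are mutually exclusive.
print(E & (S - E) == set())  # True
```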

Example 2: Drawing a Card

The sample space is

$$S = \{\text{all 52 cards}\}.$$

Let

- E={all hearts} (13 cards),

- F={all face cards (J, Q, K)} (12 cards).

Then:

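- Intersection: $E \cap F = \{J\heartsuit, Q\heartsuit, K\heartsuit\}$ is the event that the card is a heart and a face card.

- Union: $E \cup F$, which contains $13 + 12 - 3 = 22$ cards, is the event that the card is a heart or a face card (or both).

- Complement: $F^c$, which contains the 40 non-face cards, is the event that the card is not a face card.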

Algebra of Events

The operations of forming unions, intersections, and complements of events obey certain rules similar to the rules of algebra.

We list a few of these important laws:

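Commutative Laws

$$E \cup F = F \cup E, \qquad EF = FE$$

Associative Laws

$$(E \cup F) \cup G = E \cup (F \cup G), \qquad (EF)G = E(FG)$$

Distributive Laws

$$(E \cup F)G = EG \cup FG, \qquad EF \cup G = (E \cup G)(F \cup G)$$

De Morgan's Laws in Probability

For two events E and F:

- Union complement: $(E \cup F)^c = E^c \cap F^c$

- Intersection complement: $(E \cap F)^c = E^c \cup F^c$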

Explanation:

- $(E \cup F)^c$ contains all outcomes that are in neither E nor F.

- $(E \cap F)^c$ contains all outcomes that are missing from at least one of E and F, i.e., that are not in both.
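De Morgan's laws can be checked exhaustively on a small sample space. The sketch below assumes the six-outcome die sample space and tests every pair of events; the helper names `all_events` and `complement` are introduced here for illustration only.

```python
from itertools import chain, combinations

S = frozenset({1, 2, 3, 4, 5, 6})

def all_events(space):
    """Return every subset (event) of a finite sample space."""
    items = list(space)
    subsets = chain.from_iterable(
        combinations(items, r) for r in range(len(items) + 1)
    )
    return [frozenset(s) for s in subsets]

def complement(event):
    """Complement of an event relative to the sample space S."""
    return S - event

events = all_events(S)  # 2**6 = 64 events

de_morgan_holds = all(
    complement(E | F) == complement(E) & complement(F)
    and complement(E & F) == complement(E) | complement(F)
    for E in events
    for F in events
)
print(len(events), de_morgan_holds)  # 64 True
```

An exhaustive check of this kind is not a proof for arbitrary sample spaces, but it is a quick sanity test of the identities.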

Axioms of Probability

Probability can be defined in a number of ways. One way is in terms of the relative frequency of occurrence of the event:

$$P(E) = \lim_{n \to \infty} \frac{n(E)}{n},$$

where P(E) is the probability of the event E and n(E) is the number of times the event occurs in n runs of the experiment. In other words, P(E) is defined as the limiting proportion of runs in which E occurs, i.e., the limiting frequency of E.


Law of Large Numbers

Suppose we roll a fair six-sided die. The theoretical probability of getting a 3 is $P(3) = \frac{1}{6}$.

If we perform n rolls and observe k outcomes equal to 3, then the empirical probability is

$$\hat{P}(3) = \frac{k}{n}.$$

As $n \to \infty$, $\hat{P}(3)$ converges to $P(3) = \frac{1}{6}$.
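The convergence of the empirical probability can be illustrated by simulation. The following is a minimal sketch using Python's standard `random` module; the function name `empirical_probability` and the fixed seed are assumptions made for reproducibility, not part of the lecture.

```python
import random

def empirical_probability(target, n_rolls, seed=0):
    """Fraction of n_rolls fair-die rolls that equal `target`."""
    rng = random.Random(seed)  # fixed seed so that the run is reproducible
    hits = sum(1 for _ in range(n_rolls) if rng.randint(1, 6) == target)
    return hits / n_rolls

# The estimate k/n should drift towards 1/6 (about 0.1667) as n grows.
for n in (100, 10_000, 100_000):
    print(n, round(empirical_probability(3, n), 4))
```

For small n the estimate can be noticeably off; the fluctuation shrinks roughly like one over the square root of n.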

Notice that this definition inherently assumes that n(E)/n converges to the same finite value for every repetition of the experiment. This is difficult to prove without assuming the convergence in the first place. Modern probability theory therefore adopts an axiomatic approach. In particular, for each event E⊂S, we assume that there is a number P(E), the probability of the event, which satisfies the following axioms:

Axiom 1

$$0 \le P(E) \le 1.$$

The probability of an event E takes a value between 0 and 1.

Axiom 2

$$P(S) = 1.$$

The outcome of an experiment is a point in S with probability 1.

Axiom 3

For any sequence of events $\{E_1, E_2, \ldots, E_N\}$ which are mutually exclusive, i.e., $E_i E_j = \emptyset$ when $i \ne j$,

$$P\left(\bigcup_{i=1}^{N} E_i\right) = \sum_{i=1}^{N} P(E_i).$$

If events are mutually exclusive, then the chance of at least one happening is just the total of their separate chances.
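As a concrete instance, the three axioms can be checked for the equally-likely-outcomes model of a fair die. This sketch assumes $P(E) = |E|/|S|$, which is one particular probability assignment satisfying the axioms; the function name `prob` is illustrative.

```python
from fractions import Fraction

S = frozenset(range(1, 7))  # one roll of a fair die

def prob(event):
    """P(E) = |E| / |S| for equally likely outcomes (an assumption of this sketch)."""
    return Fraction(len(event & S), len(S))

# Three pairwise disjoint (mutually exclusive) events.
E1, E2, E3 = frozenset({1}), frozenset({2, 3}), frozenset({6})

print(0 <= prob(E1) <= 1)                                    # Axiom 1: True
print(prob(S) == 1)                                          # Axiom 2: True
print(prob(E1 | E2 | E3) == prob(E1) + prob(E2) + prob(E3))  # Axiom 3: True
```

Using `Fraction` avoids floating-point rounding, so the equality in Axiom 3 can be tested exactly.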

Key Propositions in Probability Theory

1. Probability of the empty set

Statement:

$$P(\emptyset) = 0$$

Proof:

The empty set ∅ is disjoint from S and S=∅∪S. By additivity,

$$P(S) = P(\emptyset) + P(S).$$

Subtract P(S)=1 from both sides:

$$0 = P(\emptyset).$$

2. Boundedness

Statement:

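$$0 \le P(A) \le 1$$

Proof:

Non-negativity gives $P(A) \ge 0$. Also $A \subseteq S$, and $S = A \cup A^c$ with $A$ and $A^c$ disjoint, so by additivity

$$1 = P(S) = P(A) + P(A^c) \ge P(A),$$

since $P(A^c) \ge 0$. Hence $P(A) \le 1$. Combining yields $0 \le P(A) \le 1$.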

Experiment: Toss a fair coin.

- Sample space: S={H,T}

- Event: A={H}

Probability:

$$P(A) = \frac{1}{2}, \qquad 0 \le P(A) \le 1$$

3. Complement Rule

Statement:

$$P(A^c) = 1 - P(A)$$

Proof:

Because $A$ and $A^c$ are disjoint and $A \cup A^c = S$,

$$1 = P(S) = P(A) + P(A^c).$$

Rearrange to get $P(A^c) = 1 - P(A)$.

Experiment: Roll a fair die.

- Event A={2,4,6} (even)

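- Complement $A^c = \{1, 3, 5\}$ (odd)

Probabilities:

$$P(A) = \frac{3}{6} = \frac{1}{2}, \qquad P(A^c) = \frac{3}{6} = \frac{1}{2}$$

Check complement rule:

$$P(A^c) = 1 - P(A) = 1 - \frac{1}{2} = \frac{1}{2}$$

4. Sub-additivity (union bound)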

Statement:

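$$P(A \cup B) \le P(A) + P(B)$$

Proof:

Start from the inclusion–exclusion identity (proved below):

$$P(A \cup B) = P(A) + P(B) - P(A \cap B).$$

Since $P(A \cap B) \ge 0$, dropping the subtracted term can only increase the right-hand side, so $P(A \cup B) \le P(A) + P(B)$.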

Experiment: Draw a card from a deck of 52.

- Event A: heart (P(A)=13/52)

- Event B: king (P(B)=4/52)

Intersection: A∩B={King of Hearts},P(A∩B)=1/52

Union bound:

$$P(A \cup B) \le P(A) + P(B)$$

Actual probability:

$$P(A \cup B) = P(A) + P(B) - P(A \cap B) = \frac{13}{52} + \frac{4}{52} - \frac{1}{52} = \frac{16}{52} \le \frac{17}{52}$$

5. Difference Rule

Statement:

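$$P(A \setminus B) = P(A) - P(A \cap B)$$

Proof:

Partition $A$ into the disjoint sets $A \setminus B$ and $A \cap B$:

$$A = (A \setminus B) \cup (A \cap B).$$

By additivity,

$$P(A) = P(A \setminus B) + P(A \cap B).$$

Rearrange to obtain the stated identity.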

Experiment: Roll a die.

- Event A={1,2,3,4}

- Event B={3,4,5,6}

Then

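$$A \setminus B = \{1, 2\}, \qquad A \cap B = \{3, 4\}$$

Probabilities:

$$P(A) = \frac{4}{6}, \qquad P(A \cap B) = \frac{2}{6}, \qquad P(A \setminus B) = \frac{2}{6}$$

Check difference rule:

$$P(A \setminus B) = P(A) - P(A \cap B) = \frac{4}{6} - \frac{2}{6} = \frac{2}{6}$$

6. Inclusion–Exclusion (two events)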

Statement:

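$$P(A \cup B) = P(A) + P(B) - P(A \cap B)$$

Proof:

Write $A \cup B$ as a disjoint union:

$$A \cup B = (A \setminus B) \cup (B \setminus A) \cup (A \cap B),$$

with the three pieces pairwise disjoint. By additivity,

$$P(A \cup B) = P(A \setminus B) + P(B \setminus A) + P(A \cap B).$$

But

$$P(A) = P(A \setminus B) + P(A \cap B), \qquad P(B) = P(B \setminus A) + P(A \cap B).$$

Adding these and subtracting $P(A \cap B)$ yields

$$P(A) + P(B) - P(A \cap B) = P(A \cup B).$$

Experiment: Draw a card from a deck of 52.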

- Event A: heart (P(A)=13/52)

- Event B: king (P(B)=4/52)

- Intersection: king of hearts (P(A∩B)=1/52)

Check formula:

$$P(A \cup B) = P(A) + P(B) - P(A \cap B) = \frac{13}{52} + \frac{4}{52} - \frac{1}{52} = \frac{16}{52}$$

7. Inclusion–Exclusion (general form)

Statement:

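For events $A_1, \ldots, A_n$,

$$P\left(\bigcup_{i=1}^{n} A_i\right) = \sum_{i} P(A_i) - \sum_{i<j} P(A_i \cap A_j) + \sum_{i<j<k} P(A_i \cap A_j \cap A_k) - \cdots + (-1)^{n+1} P(A_1 \cap \cdots \cap A_n).$$

Proof (sketch by induction):

Base n=1 is trivial. For n=2 we have the two-event formula. Assume it holds for n−1 events. Let

$$U_{n-1} = \bigcup_{i=1}^{n-1} A_i.$$

Then, by the two-event formula,

$$P(U_{n-1} \cup A_n) = P(U_{n-1}) + P(A_n) - P(U_{n-1} \cap A_n).$$

Apply the induction hypothesis to $P(U_{n-1})$. Note that

$$U_{n-1} \cap A_n = \bigcup_{i=1}^{n-1} (A_i \cap A_n),$$

so the induction hypothesis applies to this union of n−1 events as well. Collecting terms produces the alternating sum for n sets.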
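The alternating sum can be evaluated mechanically for any finite collection of events. The sketch below assumes equally likely outcomes of a die roll and uses the three die events from the experiment that follows (even, prime, at most 3); the function names `prob` and `inclusion_exclusion` are hypothetical helpers.

```python
from fractions import Fraction
from itertools import combinations

S = frozenset(range(1, 7))  # one roll of a fair die

def prob(event):
    """P(E) = |E| / |S|, assuming equally likely outcomes."""
    return Fraction(len(event), len(S))

def inclusion_exclusion(events):
    """P(A_1 U ... U A_n) as the alternating sum over all non-empty sub-collections."""
    total = Fraction(0)
    for k in range(1, len(events) + 1):
        sign = (-1) ** (k + 1)          # + for odd k, - for even k
        for group in combinations(events, k):
            total += sign * prob(frozenset.intersection(*group))
    return total

A = frozenset({2, 4, 6})  # even
B = frozenset({2, 3, 5})  # prime
C = frozenset({1, 2, 3})  # at most 3

print(inclusion_exclusion([A, B, C]))  # 1
print(prob(A | B | C))                 # 1 (direct computation agrees)
```

The number of terms grows as $2^n - 1$, so this direct evaluation is only practical for a small number of events.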

Experiment: Roll a die.

- A={even}={2,4,6},P(A)=3/6

- B={prime}={2,3,5},P(B)=3/6

- C={≤3}={1,2,3},P(C)=3/6

Intersections:

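$$A \cap B = \{2\},\ P = 1/6; \quad A \cap C = \{2\},\ P = 1/6; \quad B \cap C = \{2, 3\},\ P = 2/6; \quad A \cap B \cap C = \{2\},\ P = 1/6$$

Formula:

$$P(A \cup B \cup C) = P(A) + P(B) + P(C) - [P(A \cap B) + P(A \cap C) + P(B \cap C)] + P(A \cap B \cap C)$$

Substitute values:

$$P(A \cup B \cup C) = \frac{3}{6} + \frac{3}{6} + \frac{3}{6} - \left(\frac{1}{6} + \frac{1}{6} + \frac{2}{6}\right) + \frac{1}{6} = 1$$

8. Monotonicity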

Statement:

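If $A \subseteq B$, then

$$P(A) \le P(B).$$

Proof:

When $A \subseteq B$, we can write $B = A \cup (B \setminus A)$, where $A$ and $B \setminus A$ are disjoint. By additivity,

$$P(B) = P(A) + P(B \setminus A).$$

Since $P(B \setminus A) \ge 0$, it follows that $P(B) \ge P(A)$.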

Experiment: Roll a die.

- A={1,2},P(A)=2/6

- B={1,2,3,4},P(B)=4/6

Since A⊆B:

$$P(A) \le P(B) \;\Rightarrow\; \frac{2}{6} \le \frac{4}{6}$$

Conditional Probability

Suppose that we draw one card from a standard deck of 52 playing cards, and suppose that each of the 52 possible outcomes is equally likely to occur and hence has probability $\frac{1}{52}$. Suppose further that we are told that the card drawn is a heart. Then, given this information, what is the probability that the card is a face card (Jack, Queen, or King)?

To calculate this probability, we reason as follows: Given that the card is a heart, there are only 13 possible outcomes of our experiment, namely,

$$\{\heartsuit A, \heartsuit 2, \heartsuit 3, \ldots, \heartsuit K\}.$$

Since each of these outcomes originally had the same probability of occurring, the outcomes should still have equal probabilities. That is, given that the card is a heart, the (conditional) probability of each of these outcomes is $\frac{1}{13}$, whereas the (conditional) probability of each of the other 39 points in the sample space is 0.

Hence, the desired probability is $\frac{3}{13}$, since there are 3 favorable outcomes: $\{\heartsuit J, \heartsuit Q, \heartsuit K\}$.

If we let E and F denote, respectively, the event that the card is a face card and the event that the card is a heart, then the probability just obtained is called the conditional probability that E occurs given that F has occurred and is denoted by

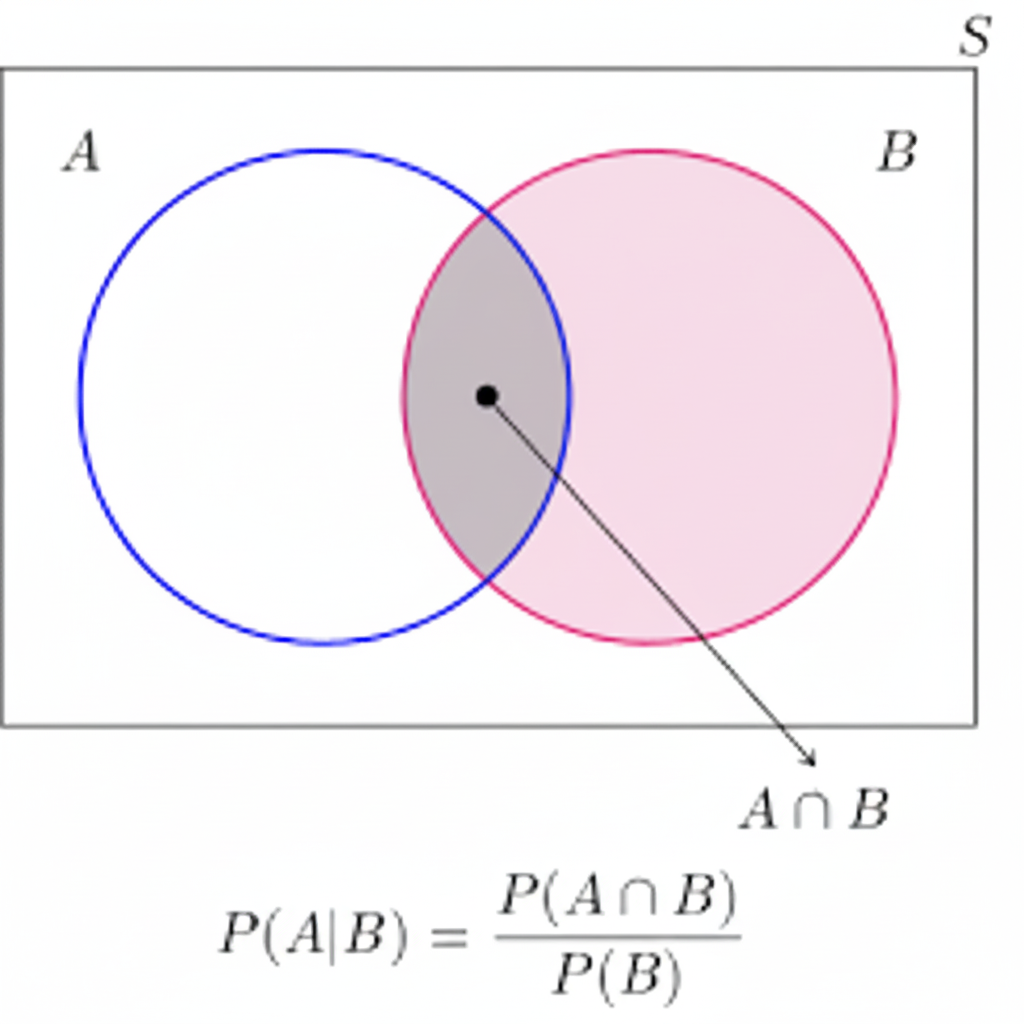

P(E∣F)Definition

A general formula for P(E∣F) that is valid for all events E and F is derived in the same manner: If the event F occurs, then, in > order for E to occur, it is necessary that the actual occurrence be a point both in E and in F; that is, it must be in E∩F. Now, > since we know that F has occurred, it follows that F becomes our new, or reduced, sample space; hence, the probability that the event E∩F occurs will equal the probability of E∩F relative to the probability of F. That is, we have the following definition:

P(E∣F)=P(F)P(E∩F), with P(F)>0.

Figure 2: Visual representation
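The card example can be reproduced by applying the definition directly. This is a minimal sketch assuming a standard 52-card deck with equally likely cards; the names `deck`, `prob`, and `cond_prob` are illustrative.

```python
from fractions import Fraction
from itertools import product

ranks = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
suits = ["hearts", "diamonds", "clubs", "spades"]
deck = set(product(ranks, suits))  # 52 equally likely outcomes

def prob(event):
    """P(E) = |E| / 52 for equally likely cards."""
    return Fraction(len(event), len(deck))

def cond_prob(E, F):
    """P(E | F) = P(E and F) / P(F), defined only when P(F) > 0."""
    if prob(F) == 0:
        raise ValueError("P(F) must be positive")
    return prob(E & F) / prob(F)

E = {card for card in deck if card[0] in {"J", "Q", "K"}}  # face card
F = {card for card in deck if card[1] == "hearts"}         # heart

print(cond_prob(E, F))  # 3/13
```

The guard on `P(F)` mirrors the condition $P(F) > 0$ in the definition: conditioning on an impossible event is left undefined.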

Multiplication Rule in Probability

The multiplication rule expresses the probability of the intersection of events in terms of conditional probabilities. For n events A1,A2,…,An, the joint probability can be written as:

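$$P(A_1 \cap A_2 \cap \cdots \cap A_n) = P(A_1)\, P(A_2 \mid A_1)\, P(A_3 \mid A_1 \cap A_2) \cdots P(A_n \mid A_1 \cap A_2 \cap \cdots \cap A_{n-1})$$

Or using product notation:

$$P\left(\bigcap_{i=1}^{n} A_i\right) = \prod_{i=1}^{n} P\left(A_i \,\middle|\, \bigcap_{j=1}^{i-1} A_j\right),$$

where the empty intersection for $i = 1$ is taken to be the whole sample space, so that the first factor is $P(A_1)$.

Bayes' Theorem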

Bayes' Theorem provides a systematic way to update the probability of an event A when new evidence B is observed.

It is based on the idea that the posterior probability P(A∣B) depends on:

- The prior probability P(A), representing our initial belief before seeing the evidence,

- The likelihood P(B∣A), the probability of observing the evidence if the event occurs, and

- The total probability of the evidence P(B), which normalizes the posterior to ensure all probabilities sum to 1.

Definition

Formally, Bayes' Theorem is:

$$P(A \mid B) = \frac{P(B \mid A)\, P(A)}{P(B)}$$

If the evidence B can occur under a set of mutually exclusive and exhaustive events $\{E_1, E_2, \ldots, E_n\}$, then the Law of Total Probability gives:

$$P(B) = \sum_{i=1}^{n} P(B \mid E_i)\, P(E_i)$$

Combining these gives the full form of Bayes' Theorem, where A is typically one of the events $E_i$:

$$P(A \mid B) = \frac{P(B \mid A)\, P(A)}{\sum_{i=1}^{n} P(B \mid E_i)\, P(E_i)}$$

This formula shows that the posterior probability increases if the evidence B is more likely when A occurs (higher likelihood) or if the prior probability P(A) is larger, but it is moderated by how likely the evidence is overall.

Example 1: Low prevalence, high test accuracy.

Consider a medical test for a rare condition:

- A = "person has the condition" with P(A)=0.01,

- E2 = "person does not have the condition" with P(E2)=0.99,

- P(B∣A)=0.95 (sensitivity),

- P(B∣E2)=0.05 (false positive rate).

Then the probability of having the condition given a positive test is:

$$P(A \mid B) = \frac{0.95 \cdot 0.01}{0.95 \cdot 0.01 + 0.05 \cdot 0.99} \approx 0.161$$

Even though the test is positive, the posterior probability rises only to about 16%, because the condition is rare and false positives are possible.

Example 2: Higher prevalence, lower test accuracy.

Now suppose the condition is more common and the test is less accurate:

- P(A)=0.3,

- P(E2)=0.7,

- P(B∣A)=0.7,

- P(B∣E2)=0.2.

Then the posterior probability is:

$$P(A \mid B) = \frac{0.7 \cdot 0.3}{0.7 \cdot 0.3 + 0.2 \cdot 0.7} = \frac{0.21}{0.35} = 0.6$$

A positive test now increases the probability from 30% to 60%, showing that with a higher prevalence even a less accurate test can produce a strong posterior probability.
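Both medical-test calculations follow the same two-hypothesis pattern, which can be sketched as a small function. The function name `posterior` and its parameter names are assumptions chosen for illustration.

```python
def posterior(prior, likelihood, false_positive_rate):
    """P(A | B) for a binary hypothesis A vs. not-A, given evidence B.

    prior               = P(A)
    likelihood          = P(B | A)
    false_positive_rate = P(B | not A)
    """
    # Law of total probability: P(B) = P(B|A) P(A) + P(B|not A) P(not A)
    evidence = likelihood * prior + false_positive_rate * (1 - prior)
    return likelihood * prior / evidence

print(round(posterior(0.01, 0.95, 0.05), 3))  # 0.161 (rare condition, accurate test)
print(round(posterior(0.30, 0.70, 0.20), 3))  # 0.6   (common condition, weaker test)
```

Varying `prior` while holding the other two arguments fixed shows how strongly prevalence drives the posterior.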

Key Takeaways:

- Bayes’ Theorem combines prior knowledge and new evidence to produce a rational update of probabilities.

- The denominator (total probability of the evidence) ensures the posterior is properly normalized.

- Posterior probabilities depend on prevalence, likelihood, and false positive/negative rates.

- It provides a quantitative framework for reasoning under uncertainty, widely used in many fields.

Practical Applications of Bayes’ Theorem

General Applications:

- Medical Diagnosis: Estimating the probability of disease given test results, as shown above.

- Risk Assessment: Calculating likelihoods of rare events (e.g., accidents, system failures).

- Decision Making: Updating beliefs based on new evidence in finance, law, and engineering.

- Fault Detection: In manufacturing or electronics, estimating the probability of failure given observed signals.

- Spam Detection: Filtering emails based on the probability that certain words indicate spam.

Some usage examples are as follows:

1. Spam Email Classification (Naive Bayes)

Suppose we want to classify an email as spam (S) or not spam (H) based on the presence of the word "discount" (W).

- Prior probability: P(S)=0.2, P(H)=0.8

- Likelihoods: P(W∣S)=0.7, P(W∣H)=0.1

Compute the probability that the email is spam given it contains "discount":

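$$P(S \mid W) = \frac{P(W \mid S)\, P(S)}{P(W \mid S)\, P(S) + P(W \mid H)\, P(H)} = \frac{0.7 \cdot 0.2}{0.7 \cdot 0.2 + 0.1 \cdot 0.8} = \frac{0.14}{0.14 + 0.08} = \frac{0.14}{0.22} \approx 0.636$$

Interpretation: The email is approximately 63.6% likely to be spam given it contains the word "discount".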

2. Disease Prediction in ML Model (Binary Classification)

Suppose a binary classifier predicts a disease based on a symptom feature:

- Prior probability: P(Disease)=0.05, P(No Disease)=0.95

- Likelihoods: P(Symptom∣Disease)=0.9, P(Symptom∣No Disease)=0.1

Compute the posterior probability:

$$P(\text{Disease} \mid \text{Symptom}) = \frac{0.9 \cdot 0.05}{0.9 \cdot 0.05 + 0.1 \cdot 0.95} = \frac{0.045}{0.045 + 0.095} = \frac{0.045}{0.14} \approx 0.321$$

Interpretation: Even though the symptom is strongly associated with the disease, the posterior probability is only 32.1% due to the low prevalence.

3. Feature-Based Classification in Naive Bayes

Suppose a classifier uses two features F1 and F2 that are assumed to be conditionally independent given the class:

- Prior: P(Class=1)=0.4, P(Class=0)=0.6

- Likelihoods: P(F1=1∣Class=1)=0.8, P(F2=1∣Class=1)=0.7

- Likelihoods for Class 0: P(F1=1∣Class=0)=0.3, P(F2=1∣Class=0)=0.2

If we observe F1=1 and F2=1, the posterior is:

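$$P(\text{Class}=1 \mid F_1=1, F_2=1) = \frac{0.8 \cdot 0.7 \cdot 0.4}{0.8 \cdot 0.7 \cdot 0.4 + 0.3 \cdot 0.2 \cdot 0.6} = \frac{0.224}{0.224 + 0.036} = \frac{0.224}{0.26} \approx 0.862$$

Interpretation: Given both features are present, there is an 86.2% chance that the sample belongs to Class 1.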
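The same computation generalizes to any number of classes and features. The following sketch assumes the naive Bayes factorization (features conditionally independent given the class); the function name `naive_bayes_posterior` and the dictionary layout are illustrative choices, not a library API.

```python
from math import prod

def naive_bayes_posterior(priors, likelihoods):
    """Posterior over classes, assuming features are conditionally independent given the class.

    priors:      {class: P(class)}
    likelihoods: {class: [P(observed feature k | class), ...]}
    """
    # Unnormalized joint: prior times the product of per-feature likelihoods.
    joint = {c: priors[c] * prod(likelihoods[c]) for c in priors}
    evidence = sum(joint.values())  # total probability of the observed features
    return {c: joint[c] / evidence for c in joint}

post = naive_bayes_posterior(
    priors={1: 0.4, 0: 0.6},
    likelihoods={1: [0.8, 0.7], 0: [0.3, 0.2]},
)
print(round(post[1], 3))  # 0.862
```

With a single feature per class, the function reduces to the ordinary two-hypothesis form of Bayes' Theorem used in the spam example.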

Example: IoT Sensor Fault Detection Using MTBF

Suppose we have a temperature sensor with a Mean Time Between Failures (MTBF) of 1000 hours. We want to determine the probability that the sensor has failed given that it shows an abnormal reading (A) after 200 hours of operation.

Step 1: Convert MTBF to failure probability

The failure rate per hour is approximately:

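$$\lambda = \frac{1}{\text{MTBF}} = \frac{1}{1000} = 0.001 \text{ per hour}$$

After t=200 hours, the prior probability of failure (assuming exponentially distributed failure times) is:

$$P(F) = 1 - e^{-\lambda t} = 1 - e^{-0.001 \cdot 200} \approx 0.181$$

So the prior probability that the sensor is still working (event W) is P(W)=1−P(F)≈0.819.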

Step 2: Sensor behavior (likelihoods)

- If the sensor is faulty, probability of abnormal reading: P(A∣F)=0.9

- If the sensor is working, probability of false alarm: P(A∣W)=0.05

Step 3: Apply Bayes’ Theorem

$$P(F \mid A) = \frac{P(A \mid F)\, P(F)}{P(A \mid F)\, P(F) + P(A \mid W)\, P(W)}$$

Substitute values:

$$P(F \mid A) = \frac{0.9 \cdot 0.181}{0.9 \cdot 0.181 + 0.05 \cdot 0.819} = \frac{0.1629}{0.1629 + 0.04095} = \frac{0.1629}{0.20385} \approx 0.799$$

Interpretation: Given an abnormal reading after 200 hours of operation, there is approximately an 80% chance that the sensor has failed.

Insight: By combining MTBF-based prior probability and observed evidence, Bayes’ Theorem helps in predictive maintenance to identify likely sensor failures early and reduce system downtime.
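The three steps above can be sketched as a single function. The function and parameter names are hypothetical; the exponential failure model is the same assumption made in Step 1.

```python
import math

def failure_posterior(mtbf_hours, t_hours, p_abnormal_given_failed, p_abnormal_given_working):
    """P(failed | abnormal reading), with an exponential failure-time prior."""
    rate = 1.0 / mtbf_hours                     # lambda, failures per hour
    p_failed = 1.0 - math.exp(-rate * t_hours)  # prior P(F) after t hours
    p_working = 1.0 - p_failed                  # prior P(W)
    evidence = (p_abnormal_given_failed * p_failed
                + p_abnormal_given_working * p_working)  # P(A)
    return p_abnormal_given_failed * p_failed / evidence

print(round(failure_posterior(1000, 200, 0.9, 0.05), 3))  # 0.799
```

Because the prior grows with operating time, calling the function with a larger `t_hours` yields a higher posterior for the same abnormal reading.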

Random Variable

Generally, when an experiment is performed, we are interested in functions of the outcome rather than the outcome itself. For instance, when tossing two dice, we may be interested in whether the sum of the faces equals 6, and not much concerned with the individual face values. The sum equals 6 for any of the outcomes in the set $\hat{S} = \{(1,5), (2,4), (3,3), (4,2), (5,1)\}$. Functions that map the outcomes in the sample space to real values are known as random variables. Formally,

Definition

A random variable (RV) X is a function mapping outcomes to real values:

$$X : S \to \mathbb{R}$$

A discrete RV takes values in a countable set (e.g., $\mathbb{Z}$), while a continuous RV can take values in a continuum such as $\mathbb{R}$.
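The two-dice example shows how a random variable groups outcomes by value. The sketch below assumes two fair dice with 36 equally likely outcomes; `X` and `prob_of_value` are names introduced for illustration.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

S = list(product(range(1, 7), repeat=2))  # 36 equally likely outcomes

def X(outcome):
    """Random variable: the sum of the two faces."""
    return sum(outcome)

# Count how many outcomes map to each value of X, then normalize.
counts = Counter(X(s) for s in S)
prob_of_value = {x: Fraction(c, len(S)) for x, c in counts.items()}

print(prob_of_value[6])             # 5/36
print(sum(prob_of_value.values()))  # 1
```

The value 5/36 corresponds to the five outcomes (1,5), (2,4), (3,3), (4,2), (5,1) listed above.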

Probability Mass Function (PMF)

For a discrete random variable:

$$p_X(x) = P(X = x)$$

with

$$\sum_{x} p_X(x) = 1$$

Example

Suppose that our experiment consists of tossing 3 fair coins. If we let Y denote the number of heads that appear, then Y is a random variable taking on one of the values 0,1,2, and 3 with respective probabilities

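$$\begin{aligned}
P\{Y=0\} &= P\{(T,T,T)\} = \tfrac{1}{8}, \\
P\{Y=1\} &= P\{(T,T,H),(T,H,T),(H,T,T)\} = \tfrac{3}{8}, \\
P\{Y=2\} &= P\{(T,H,H),(H,T,H),(H,H,T)\} = \tfrac{3}{8}, \\
P\{Y=3\} &= P\{(H,H,H)\} = \tfrac{1}{8}.
\end{aligned}$$

Probability Density Function (PDF)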

We say that X is a continuous random variable if there exists a function $f_X$ with

$$f_X(x) \ge 0, \qquad \int_{-\infty}^{\infty} f_X(x)\,dx = 1,$$

so that we can define the probability of a set $B \subseteq \mathbb{R}$ to be

$$P(X \in B) = \int_B f_X(x)\,dx.$$

In other words, the probability that X takes a value in $[a, b]$ is given by:

$$P(a \le X \le b) = \int_a^b f_X(x)\,dx = F_X(b) - F_X(a), \qquad F_X(z) = \int_{-\infty}^{z} f_X(x)\,dx.$$

Cumulative Distribution Function (CDF)

For both discrete and continuous cases:

$$F_X(x) = P(X \le x)$$

Expectation and Variance

Expectation (Mean):

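$$E[X] = \begin{cases} \sum_{x} x\, p_X(x) & \text{discrete} \\ \int_{-\infty}^{\infty} x\, f_X(x)\,dx & \text{continuous} \end{cases}$$

Variance:

$$\mathrm{Var}(X) = E\left[(X - E[X])^2\right]$$

Inequalities of Expected Value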

Expected value inequalities provide upper bounds on the probability of a random variable's value deviating from its expected value. They are used when the exact probability distribution is unknown, offering a way to make probabilistic statements with limited information.

1. Markov's Inequality

Markov's inequality is a fundamental tool that applies to any non-negative random variable. It gives an upper bound on the probability that the random variable is greater than or equal to some positive constant.

Statement: For a non-negative random variable X and a positive constant a>0, the inequality is:

$$P(X \ge a) \le \frac{E[X]}{a}$$

Analogy: If you know the average length of a movie is 120 minutes (E[X]=120), you can use this inequality to say that the probability of randomly picking a movie that is 240 minutes long or longer is no more than 120/240=0.5.
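Markov's bound can be compared with exact tail probabilities for a simple non-negative random variable. The sketch below uses one roll of a fair die as an assumed example; the helper names are illustrative.

```python
from fractions import Fraction

FACES = range(1, 7)
P_FACE = Fraction(1, 6)                # fair die: each face equally likely
MEAN = sum(x * P_FACE for x in FACES)  # E[X] = 7/2

def tail_probability(a):
    """Exact P(X >= a) for one roll of a fair die."""
    return sum(P_FACE for x in FACES if x >= a)

def markov_bound(a):
    """Markov upper bound E[X] / a, valid for a > 0."""
    return MEAN / a

# Columns: a, exact tail probability, Markov bound, whether the bound holds.
for a in FACES:
    print(a, tail_probability(a), markov_bound(a),
          tail_probability(a) <= markov_bound(a))
```

For small a the bound exceeds 1 and carries no information; it only becomes useful when a is large relative to the mean.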

2. Chebyshev's Inequality

Chebyshev's inequality is a more powerful version of Markov's that uses the variance of the random variable. It provides a tighter bound on the probability that a random variable deviates from its mean by more than a certain amount. It applies to any random variable with a finite mean and variance.

Statement: For a random variable X with finite expected value $\mu = E[X]$ and finite non-zero variance $\sigma^2 = \mathrm{Var}(X)$, and for any constant $k > 0$, the inequality is:

$$P(|X - \mu| \ge k) \le \frac{\sigma^2}{k^2}$$

Analogy: If you're measuring the height of students and you know the average height is 5'9" with a standard deviation of 2 inches, Chebyshev's inequality (with k = 4 inches, so that $\sigma^2/k^2 = 4/16$) guarantees that no more than 1/4 of the students are either shorter than 5'5" or taller than 6'1" (i.e., at least 2 standard deviations from the mean).
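Chebyshev's bound can be checked exactly on the three-coin example from the PMF section, where Y is the number of heads. This is a minimal sketch; the choice k = 3/2 is an assumption made for illustration.

```python
from fractions import Fraction

# PMF of Y = number of heads in three fair coin tosses.
pmf = {0: Fraction(1, 8), 1: Fraction(3, 8), 2: Fraction(3, 8), 3: Fraction(1, 8)}

mean = sum(y * p for y, p in pmf.items())                    # E[Y] = 3/2
variance = sum((y - mean) ** 2 * p for y, p in pmf.items())  # Var(Y) = 3/4

k = Fraction(3, 2)
# Exact P(|Y - mean| >= k): only Y = 0 and Y = 3 deviate this far.
deviation_prob = sum(p for y, p in pmf.items() if abs(y - mean) >= k)
chebyshev_bound = variance / k**2

print(mean, variance)                   # 3/2 3/4
print(deviation_prob, chebyshev_bound)  # 1/4 1/3
```

The exact probability 1/4 sits below the bound 1/3, as the inequality requires; the gap shows that the bound is generally not tight.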

3. Jensen's Inequality

Jensen's inequality is different from the others as it doesn't directly bound probabilities. Instead, it relates the expected value of a convex or concave function to the function of the expected value. It's a foundational concept in optimization and information theory.

Statement: For a convex function ϕ:

$$E[\phi(X)] \ge \phi(E[X])$$

For a concave function ϕ:

$$E[\phi(X)] \le \phi(E[X])$$

Analogy: Imagine a game where your winnings are a convex function of your dice roll. Jensen's inequality tells you that your average winnings will be greater than or equal to what you'd win if the die always landed on its average value (3.5). This shows that variability can be beneficial for convex functions.
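The dice analogy can be made concrete with a specific convex payoff. The sketch below assumes the payoff $\phi(x) = x^2$, chosen purely for illustration, and a fair six-sided die.

```python
from fractions import Fraction

FACES = range(1, 7)
P_FACE = Fraction(1, 6)  # fair die

def phi(x):
    """A convex payoff function (chosen for illustration)."""
    return x * x

mean = sum(x * P_FACE for x in FACES)                  # E[X] = 7/2
expected_payoff = sum(phi(x) * P_FACE for x in FACES)  # E[phi(X)] = 91/6
payoff_at_mean = phi(mean)                             # phi(E[X]) = 49/4

print(expected_payoff, payoff_at_mean, expected_payoff >= payoff_at_mean)  # 91/6 49/4 True
```

For this payoff the gap between the two sides, 91/6 − 49/4 = 35/12, is exactly the variance of the die roll.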

References

[1] Ross, "Signal Processing for Communications", EPFL Press, 2008.