Lecture 1 - Part 1

Contents

- Introduction to IoT and Edge Computing

- Applications Driving the Edge and IoT Revolution

- IoT

- Digital Twins

- 5G, 6G and Internet-of-Senses

- Internet-of-Senses

- References

Introduction to IoT and Edge Computing

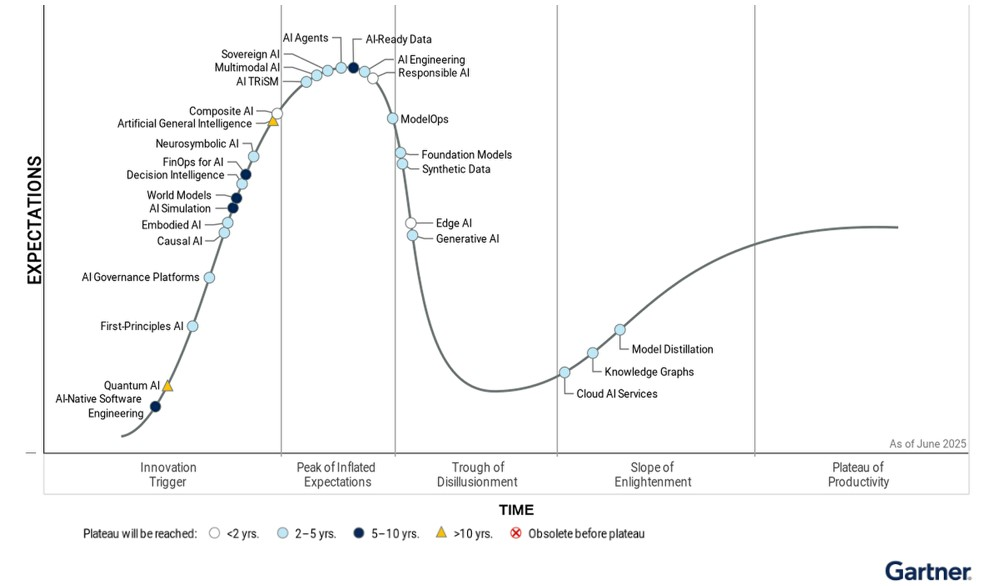

Internet-of-Things (IoT) and Artificial Intelligence (AI)

Artificial intelligence (AI) is fundamentally a collection of digital technologies that derive cognition (learning, planning and orientation) through perception of the environment, thereby providing the capability to make the right decisions at the right time. Machine Learning (ML) is an algorithmic tool-set for enabling AI. In this context, cognition is implemented as a learning process based on experience in relation to a certain task with an associated performance measure. The experience is often derived from the data collected through perception of the environment [1].

The symbiosis of AI and the Internet-of-Things (IoT) is natural. IoT provides a perception layer for many smart-city applications, and ML augments sensing capabilities by deriving intelligence from data collected through this perception layer. A typical IoT device has a micro-controller unit (MCU), potentially with an integrated low-power radio transceiver for wireless connectivity. Each MCU is furnished with a limited amount of memory and is typically designed to operate on a coin cell for several years. These low-cost IoT devices have a small footprint, as they are often designed to be unobtrusive. In the current IoT architecture, data from the end-nodes/IoT devices is typically transmitted to and aggregated at the gateways. The transmission of data is supported by a variety of connectivity technologies, ranging from unlicensed technologies such as WiFi and Low-Power Wide-Area-Networking (LPWAN) technologies (e.g. LoRa and Sigfox) to licensed cellular radio, e.g. LTE for Machines (LTE-M) and Narrowband IoT (NB-IoT). The gateways are then connected to cloud platforms via a broadband internet connection, which allows storage, processing and inference on the aggregated sensor data.

A Journey into Internet of Things (IoT)

Computing at the Edge

1. The Big Picture: IoT

Connecting Everything

Billions of devices connected over the internet, creating an unprecedented network of smart objects that can sense, communicate, and act autonomously.

Key Challenges with Cloud Based Architecture

There are several key challenges which demand a departure from the cloud-centric architecture. To list a few:

Power Consumption for Wireless Connectivity:

Most IoT devices are battery-powered, allowing tether-less connectivity in scenarios where: i. a fixed power supply does not exist; ii. the power source is not co-located with the points of interest (PoI) for monitoring or actuation; iii. plugging into mains is costly and outweighs the device utility; and iv. mains power limits the mobility of the object in which the IoT sensors are embedded. However, reliance on battery power means that operational lifetime is limited, so power consumption is of paramount importance.

Typically, local computation is several-fold less power-hungry than transmission over a wireless channel [2]. Moreover, while sensors collect a huge amount of data, from an application perspective it is the inference that matters. This inevitably calls for compute-efficient methods of localized ML, which also improve bandwidth efficiency.
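The trade-off between transmitting raw data and computing locally can be illustrated with a rough battery budget. The following is a minimal sketch, not a measurement: the coin-cell capacity, the sleep, radio and compute currents, and the duty cycles are all hypothetical values chosen only for illustration, and `battery_lifetime_years` is a helper introduced here.

```python
def battery_lifetime_years(capacity_mah, sleep_ua, phases):
    """Estimate battery lifetime from the average current over one hour.

    phases: list of (current_mA, seconds_per_hour) for each active phase.
    Self-discharge and voltage droop are ignored in this sketch.
    """
    active_s = sum(seconds for _, seconds in phases)
    active_charge = sum(ma * seconds for ma, seconds in phases)  # mA*s
    sleep_charge = (sleep_ua / 1000.0) * (3600.0 - active_s)     # mA*s
    avg_ma = (active_charge + sleep_charge) / 3600.0
    return capacity_mah / avg_ma / (24 * 365)

# Hypothetical figures (assumptions, not taken from any datasheet).
CAPACITY_MAH = 220.0  # assumed coin-cell capacity
SLEEP_UA = 2.0        # assumed sleep current
RADIO_MA = 20.0       # assumed current while transmitting
COMPUTE_MA = 4.0      # assumed current during local inference

# Option 1: stream raw data, radio active 10 s every hour.
raw_years = battery_lifetime_years(CAPACITY_MAH, SLEEP_UA, [(RADIO_MA, 10.0)])

# Option 2: infer locally for 5 s, then transmit only the result for 0.5 s.
local_years = battery_lifetime_years(
    CAPACITY_MAH, SLEEP_UA, [(COMPUTE_MA, 5.0), (RADIO_MA, 0.5)]
)

print(f"raw streaming: {raw_years:.2f} years, local inference: {local_years:.2f} years")
```

Under these assumed numbers the radio dominates the energy budget, so shortening the time on air extends the lifetime even though extra computation is performed.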

Latency:

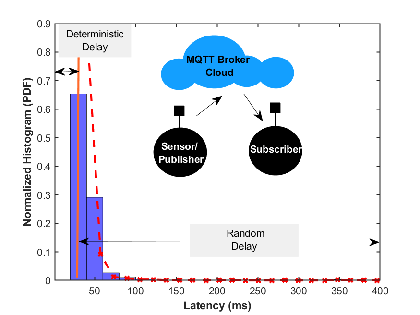

Fig 1: Latency Example

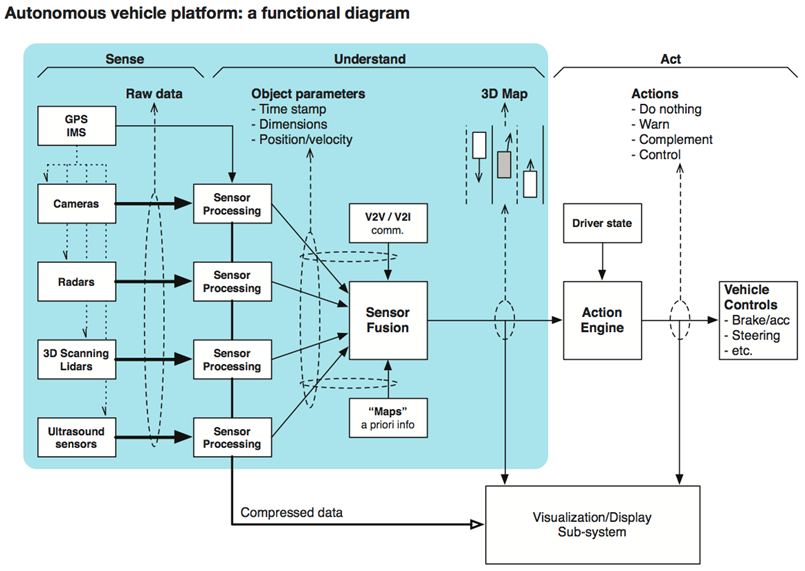

The current architecture not only incurs a power cost to facilitate wireless connectivity but also introduces non-deterministic delay. Fig. 1 shows the probability density function (PDF) of latency for a Message Queuing Telemetry Transport (MQTT) packet published by an IoT device (e.g. a temperature sensor) for a subscriber such as a remote monitoring tablet. Each packet is 140 bytes in size and latency is measured at the application layer. The latency consists of a minimum fixed component (which itself is a function of the load on the server, time of day, etc.) and a random delay that follows a power-law distribution. This scenario does not include the time taken to perform ML inference. Nevertheless, it shows that the round-trip latency of cloud-centric solutions is unpredictable. Therefore, it is highly desirable to move the inference capabilities to the edge, in particular when sensors drive actuation, e.g. in autonomous vehicles or robotics applications, where latency must be minimised.
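The "fixed minimum plus power-law tail" behaviour described above can be reproduced with a small simulation. This is a minimal sketch under assumed parameters: the minimum latency, scale and tail exponent are hypothetical and are not fitted to the data in Fig. 1. The random part is drawn from a Pareto distribution using the standard library.

```python
import random
import statistics

def simulate_latency_ms(n, min_ms=40.0, scale_ms=10.0, alpha=1.5, seed=0):
    """Latency = fixed minimum + heavy-tailed (Pareto) random delay.

    All parameter values are illustrative assumptions.
    """
    rng = random.Random(seed)
    # paretovariate returns values >= 1, so subtracting 1 gives a delay >= 0.
    return [min_ms + scale_ms * (rng.paretovariate(alpha) - 1.0) for _ in range(n)]

samples = simulate_latency_ms(10_000)
cuts = statistics.quantiles(samples, n=100)  # 99 percentile cut points
median_ms, p99_ms = cuts[49], cuts[98]

print(f"median = {median_ms:.1f} ms, 99th percentile = {p99_ms:.1f} ms")
```

The gap between the median and the 99th percentile is what makes cloud round trips hard to budget for in control loops: a design that meets its deadline on average can still miss it in the tail.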

Privacy:

Another disadvantage of the cloud-centric architecture is that raw data from the sensor has to traverse the gateway and the internet to reach the cloud. Any compromise in security leads to privacy violations. Also, there is no transparency on how data is being used and which applications are authorized to use it. Moving inference closer to the user, i.e. to the edge, enables an architecture in which raw data is never transmitted to the cloud. Only inferences derived from the raw data are transmitted to the cloud and stored for application-specific actions. In an enterprise setup, data sovereignty and business insights also need to be protected. This also warrants processing data close to the applications.
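A minimal sketch of this idea follows: the device keeps the raw window local and uploads only a compact inference. The threshold rule stands in for an on-device ML model, and the field names, threshold and synthetic readings are hypothetical.

```python
import json

def infer_locally(samples, threshold_c=30.0):
    """Stand-in for an on-device model: a simple threshold on the window mean."""
    mean = sum(samples) / len(samples)
    return {
        "event": "overheat" if mean > threshold_c else "normal",
        "mean_c": round(mean, 1),
    }

# Synthetic temperature readings (illustrative only); these never leave the device.
raw_window = [24.0 + 0.01 * i for i in range(1000)]

inference = infer_locally(raw_window)

# Compare what would be uploaded in each architecture.
raw_bytes = len(json.dumps(raw_window).encode())
inference_bytes = len(json.dumps(inference).encode())
print(f"raw upload: {raw_bytes} bytes, inference upload: {inference_bytes} bytes")
```

Besides keeping the raw samples private, the uploaded payload is much smaller, which ties back to the power and bandwidth arguments made earlier.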

Connectivity:

Lastly, in the current architecture gateways need to be connected to the internet. Provisioning such connectivity incurs both capital expenditure (CAPEX) and operational expenditure (OPEX), with management overheads associated with maintaining the infrastructure. Local inference capabilities reduce the reliance on connectivity, enabling provision of services in areas where internet connectivity is intermittent or does not exist at all.

Applications Driving the Edge and IoT Revolution

Industry 4.0 and Beyond

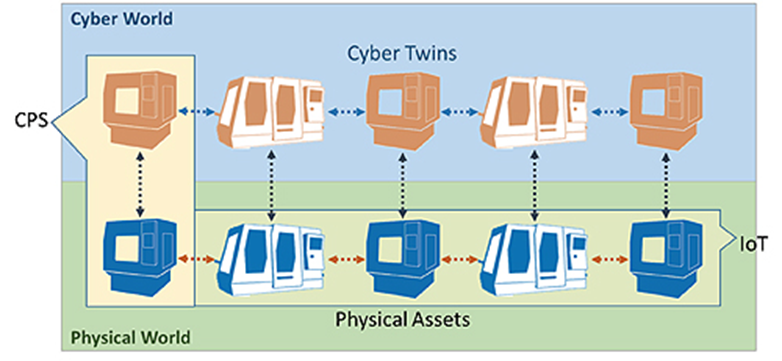

Fig 2: Industry 4.0 (courtesy of IEEE).

The Industry 4.0 paradigm aims to integrate advanced manufacturing techniques with cyber physical systems (CPS) to create an agile digital manufacturing ecosystem. The main goal is to instrument production processes by embedding sensors, actuators and other control devices which autonomously communicate with each other throughout the value-chain [3].

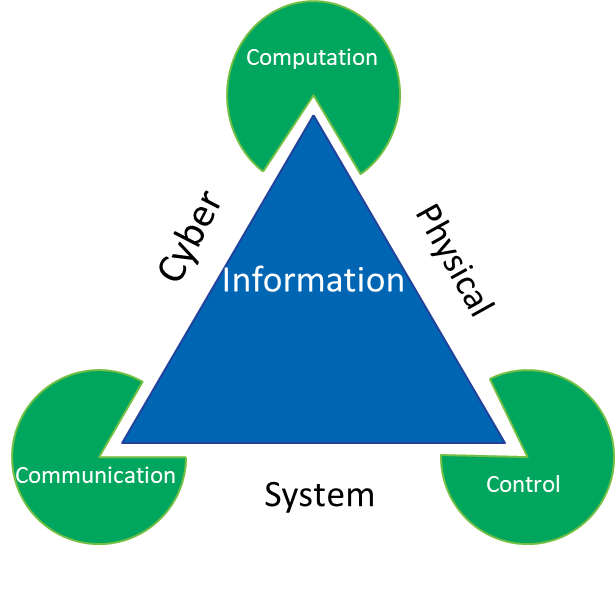

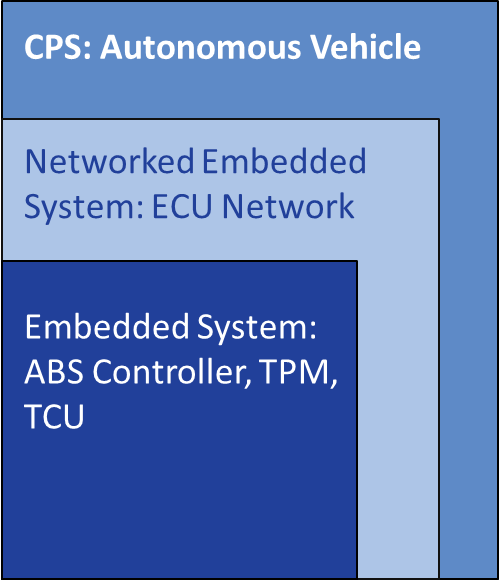

Cyber Physical Systems (CPS)

Definition

Cyber physical systems (CPS) integrate the physical (real world) and digital (virtual world) processes. Sensors and actuators link the digital and physical worlds, enabling monitoring, control, and optimization.

CPS employs digital processes to implement:

- Communication

- Control

- Computation

These are tightly integrated with physical systems.

Key Characteristics of CPS

- Embedded computation: Tight integration of processors with physical processes

- Feedback loops: Continuous monitoring and actuation

- Networked systems: Rely on communication protocols (wired/wireless)

- Real-time requirements: Timely responses are critical

- Scalability & resilience: Must adapt to complex, large-scale environments
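The feedback-loop characteristic can be illustrated with a toy sense-compute-actuate cycle. This is a minimal sketch, assuming a hypothetical heater and a first-order thermal model; the setpoint, hysteresis band and plant constants are illustrative only.

```python
def simulate_thermostat(steps=200, setpoint=22.0, band=0.5, ambient=15.0):
    """Bang-bang controller with hysteresis acting on a simple thermal model."""
    temp = ambient
    heater_on = False
    history = []
    for _ in range(steps):
        # Sense + compute (cyber side): decide the actuator state.
        if temp < setpoint - band:
            heater_on = True
        elif temp > setpoint + band:
            heater_on = False
        # Actuate + physical process: heat input minus loss to ambient.
        heat_in = 1.0 if heater_on else 0.0
        temp += heat_in - 0.1 * (temp - ambient)
        history.append(temp)
    return history

history = simulate_thermostat()
print(f"temperature after {len(history)} steps: {history[-1]:.2f} C")
```

The loop only works if each sense-compute-actuate cycle completes within one time step, which is why real-time requirements appear alongside feedback loops in the list above.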

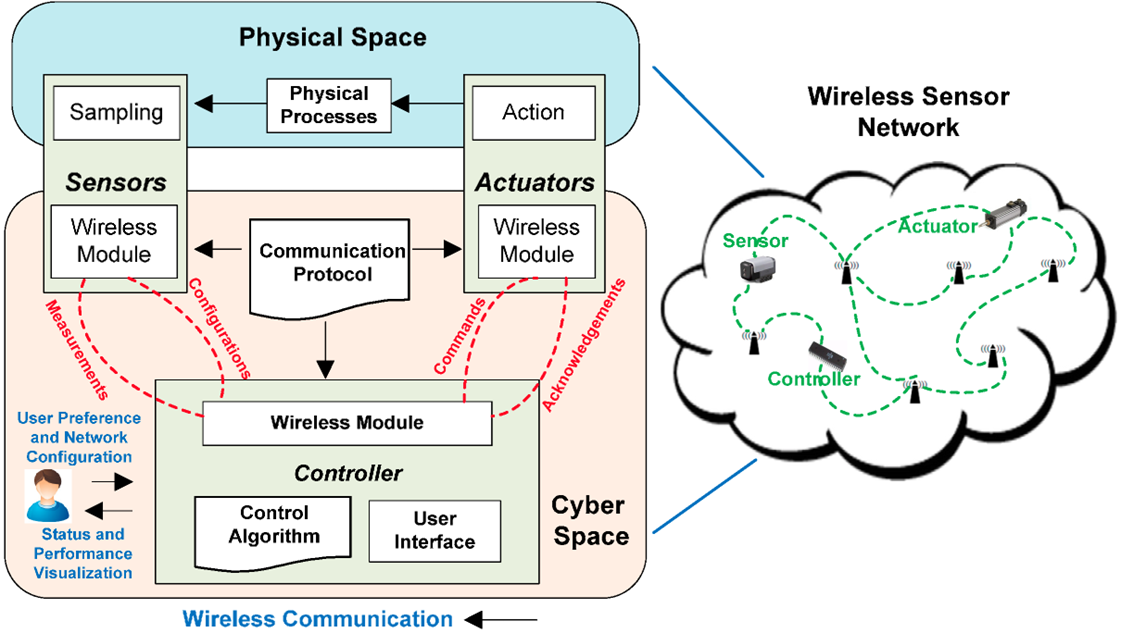

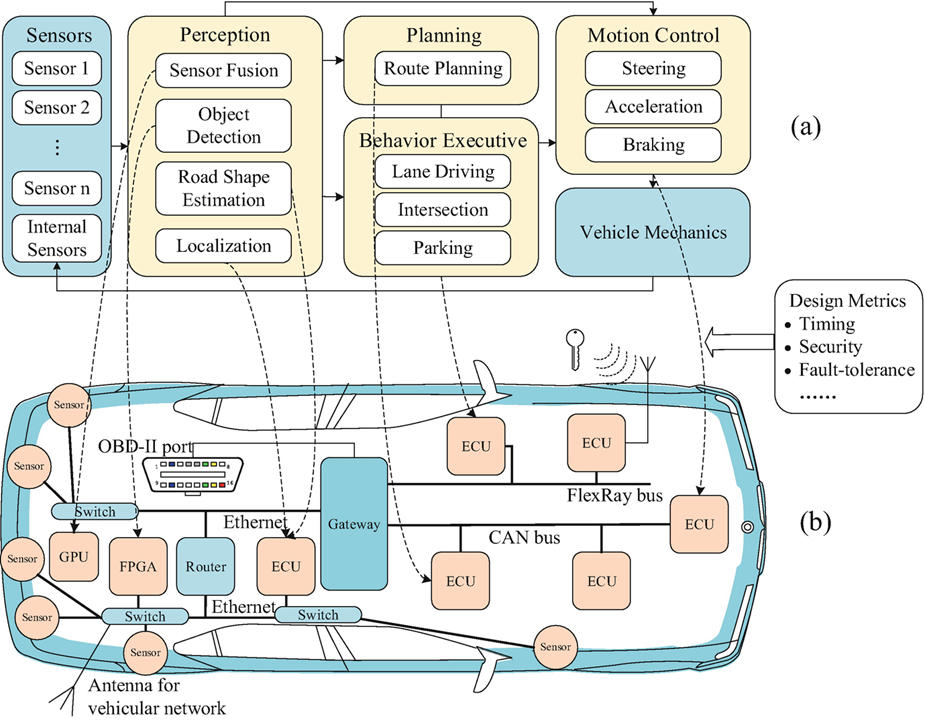

CPS Architecture [4]

Architecture of a CPS

Layers:

- Perception Layer: The perception layer is formed of IoT devices which collect data for both physical processes and the operational environment.

- Network Layer: Communication technologies like 5G, Wi-Fi, Zigbee, LoRa, etc. which allow transmission of data.

- Computation/Control Layer: Embedded processors alongside cloud/edge computing provide a platform for implementing control algorithms and the capability to deploy AI models.

- Application Layer: End-user services, e.g. smart transportation, healthcare, robotics.

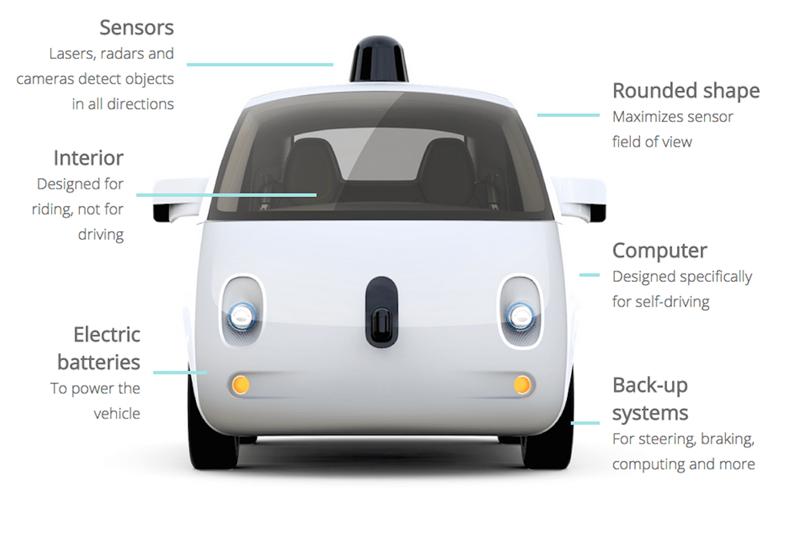

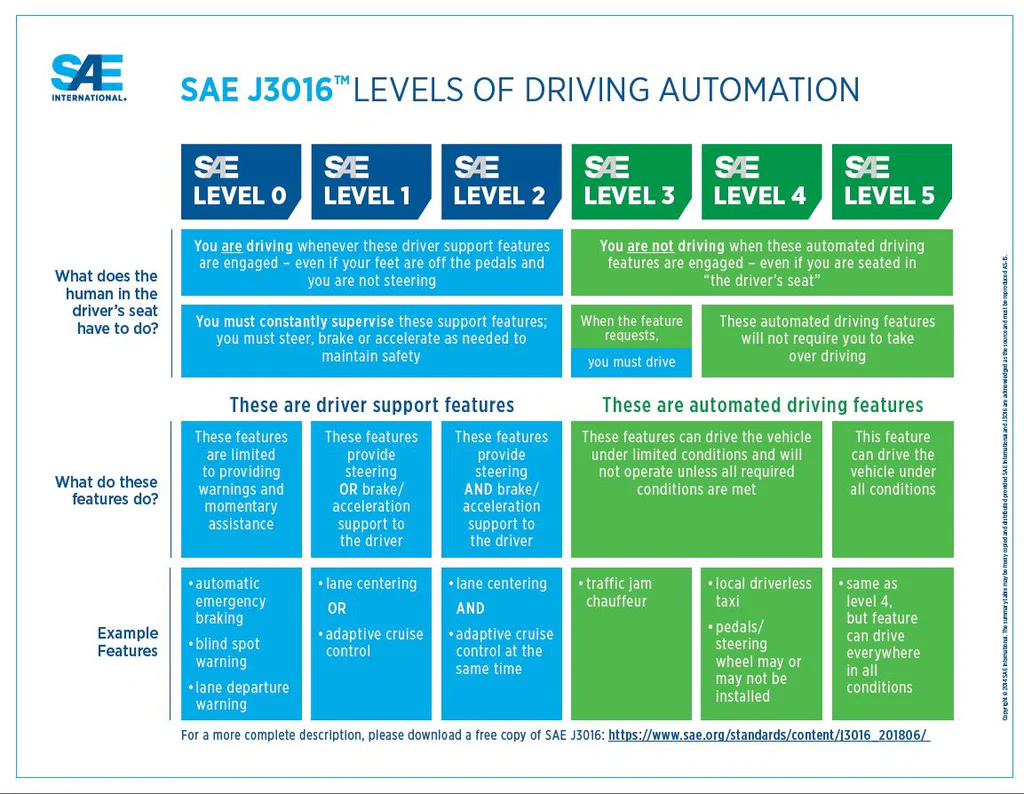

CPS Model for Vehicle [5]

Enabling Technologies

- IoT (Internet of Things)

- Wireless Communication (5G/6G, URLLC)

- Embedded Systems

- Artificial Intelligence & Machine Learning

- Cloud/Edge/Fog Computing

- Digital Twins

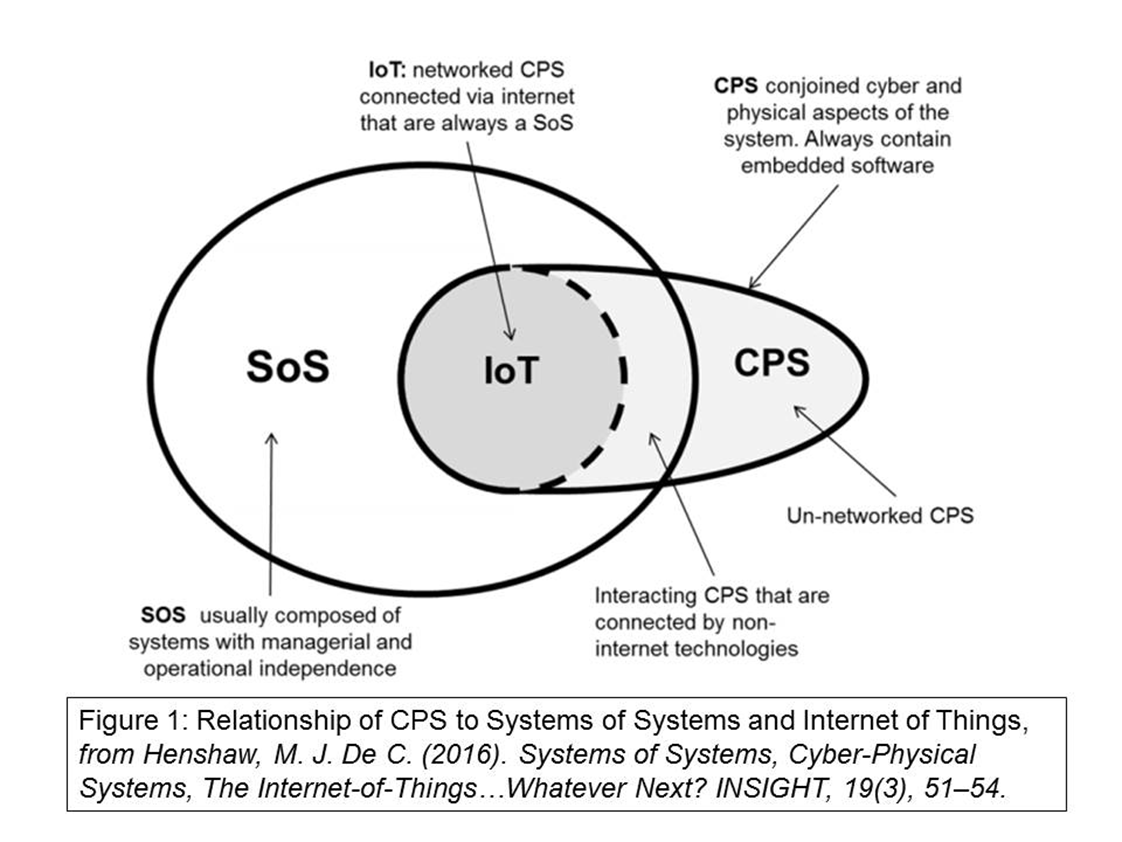

IoT

Definition

The Internet of Things (IoT) refers to a network of physical objects embedded with sensors, software, and connectivity, enabling them to collect, exchange, and act on data autonomously or with minimal human intervention.

The term was first coined by Kevin Ashton in 1999, who envisioned connecting everyday objects to the internet to track, monitor, and optimize processes in real time, such as supply chains and industrial systems. IoT can also be understood through the lens of Weaver’s principle from communication theory, which emphasizes that effective communication involves not only the accurate transmission of data (technical problem) but also correct interpretation of information (semantic problem) and meaningful action based on that data (effectiveness problem). In essence, IoT embodies Ashton’s vision of connected “smart” objects while applying Weaver’s framework to ensure that the collected data is accurately communicated, correctly understood, and effectively used to drive decisions and actions.

INTERNET of THINGS

Different Services, Different Technologies

Different Meanings for Everyone

Sensing

Processing

Connectivity

Cloud & Edge

Technology Innovations

- Miniaturization & advances in packaging technologies

- Advances in flash memory and storage

- New class of powerful but low-cost & low-power MCUs

- Cloud-based services and edge computing

- 5G and advanced networking protocols

- AI/ML optimization for edge devices

- Advanced sensor fusion techniques

- Blockchain for IoT security

Digital Twins

Definition

The information collected from the Industrial IoT devices can be utilized to create digital replicas of the physical processes, machines and operating conditions. These digital replicas are more commonly known as digital twins. A digital twin of a physical entity can be placed in a virtual production environment, allowing prediction of the future state of the system given the current trajectory of evolution, which is based on the sensed data. This allows for proactive optimization of the production processes and a reduction in equipment downtime. In summary, the key benefits of digital twinning include: i) reduction in downtime through proactive repairs and agile reconfiguration of manufacturing cells; ii) reduction in ramp-up time for new manufacturing processes; and iii) decrease in cycle time through online optimization.
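Predicting a future state from the current trajectory can be sketched with a simple trend extrapolation. The example below fits a least-squares line to recent sensor readings and estimates how many steps remain until an alarm threshold is crossed. This is only one simple stand-in for the predictive model a real digital twin might use; the function name, readings and threshold are hypothetical.

```python
def steps_until_threshold(readings, threshold):
    """Fit a least-squares line to equally spaced readings and extrapolate.

    Returns the predicted number of steps after the last reading until the
    threshold is reached, or None if the trend is not increasing.
    """
    n = len(readings)
    mean_x = (n - 1) / 2
    mean_y = sum(readings) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(readings))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    if slope <= 0:
        return None  # no upward trend, so no crossing is predicted
    crossing = (threshold - intercept) / slope
    return max(0.0, crossing - (n - 1))

# Synthetic bearing temperature rising by 0.5 degrees per step (illustrative).
readings = [60.0 + 0.5 * i for i in range(10)]
remaining = steps_until_threshold(readings, threshold=70.0)
print(f"predicted steps until threshold: {remaining:.1f}")
```

A maintenance action scheduled before the predicted crossing is the kind of proactive repair that reduces downtime.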

Besides digital twinning, other key enablers for Industry 4.0 include collaborative robotics (also known as cobotics) and tele-robotics. The former is mainly focused on robot-robot and human-robot collaborative assembly on a production line, while the latter is geared towards amplification of human capacity (e.g. force or scale of operation) or operation in hard-to-access areas. Recently, the cloud robotics paradigm, whereby the brain of the robotic system is implemented in the cloud, has gained significant popularity and is envisioned as a key enabler of both cobotics and tele-robotic systems.

The above-mentioned applications vary significantly in their connectivity requirements, ranging from low data rate, reliable connections for Industrial IoT (IIoT) sensor readings to high-bandwidth, low-latency connections for immersive virtual/augmented reality (VR/AR) digital twins. While 5G wireless technologies are seen as a key enabler for realizing ultra-reliable low-latency communication (URLLC), the current Release 15, which is being deployed commercially, does not include such features. In summary, an extensive mapping of the desired features for these Industry 4.0 applications leads to three key requirements for the communication networks, i.e., reliability, resilience, and security.

1. What is Reliability?

Definition

Reliability: The probability that a system or component performs its intended function without failure for a specified period of time. In Discrete Time (DT), we assume time progresses in steps (e.g., hours, days, cycles).

2. Key Functions in Reliability Theory

Reliability Function:

$$R(k) = P(T > k)$$

where $T$ is the lifetime (in discrete steps) and $k$ is the number of steps elapsed. This is the probability that the system survives beyond step $k$.

Probability Mass Function (PMF) of Lifetime:

$$f(k) = P(T = k)$$

This is the probability that failure occurs exactly at step $k$.

Cumulative Distribution Function (CDF):

$$F(k) = P(T \le k) = \sum_{i=0}^{k} f(i)$$

This is the probability that the system fails at or before step $k$.

Hazard Function (failure rate at step k):

$$h(k) = \frac{f(k)}{R(k-1)}$$

This is the probability of failure at step $k$ given survival up to step $k-1$.

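A constant hazard $h(k) = p$ at every step gives the geometric lifetime model $R(k) = (1-p)^k$. This models components with memoryless behavior (with constant failure risk at each step, e.g. each hour).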
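The four functions can be checked numerically for a memoryless component. The sketch below assumes a geometric lifetime with a hypothetical per-step failure probability `p` and the convention that the first possible failure is at step 1. It confirms that the hazard is constant and that $R(k) = (1-p)^k$.

```python
def pmf(k, p):
    """f(k) = P(T = k): failure occurs exactly at step k (k >= 1)."""
    return 0.0 if k < 1 else (1 - p) ** (k - 1) * p

def cdf(k, p):
    """F(k) = P(T <= k): sum of the PMF up to and including step k."""
    return sum(pmf(i, p) for i in range(k + 1))

def reliability(k, p):
    """R(k) = P(T > k) = 1 - F(k)."""
    return 1.0 - cdf(k, p)

def hazard(k, p):
    """h(k) = f(k) / R(k - 1): failure at step k given survival to k - 1."""
    return pmf(k, p) / reliability(k - 1, p)

p = 0.05  # assumed per-step failure probability
for k in (1, 5, 10):
    print(k, round(reliability(k, p), 4), round(hazard(k, p), 4))
```

With `p = 0.05` the reliability equals $(0.95)^k$, the same form used for the first smoke detector in Problem 1.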

4. Systems Reliability in DT

- Series System (fails if any component fails): $R_{\text{series}}(k) = \prod_{i=1}^{n} R_i(k)$

- Parallel System (fails only if all components fail): $R_{\text{parallel}}(k) = 1 - \prod_{i=1}^{n} \left(1 - R_i(k)\right)$
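The two formulas translate directly into code. This is a minimal sketch that assumes independent component failures, as the product formulas do; the component reliabilities in the example are hypothetical.

```python
import math

def series_reliability(component_reliabilities):
    """System works only if every component works."""
    return math.prod(component_reliabilities)

def parallel_reliability(component_reliabilities):
    """System fails only if every component fails."""
    return 1.0 - math.prod(1.0 - r for r in component_reliabilities)

# Two components with assumed reliabilities 0.9 and 0.8 at some step k.
rs = [0.9, 0.8]
print(f"series:   {series_reliability(rs):.2f}")
print(f"parallel: {parallel_reliability(rs):.2f}")
```

A series arrangement is never more reliable than its weakest component, while a parallel arrangement is never less reliable than its strongest one.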

5. Practical Use Cases

- Manufacturing: Predicting downtime in machines.

- Communications: Packet delivery success probability in networks.

- Cyber-Physical Systems: Ensuring system survivability in DT steps.

Problem 1 — Two Smoke Detectors in Parallel

In a smart home, two smoke detectors are installed in parallel (the system fails only if both fail).

The component reliabilities are:

$$R_1(k) = (0.95)^k, \quad R_2(k) = (0.97)^k$$

Find the system reliability after k=5 days.

Solution

For a parallel system with two components:

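$$R_{\text{parallel}}(k) = 1 - (1 - R_1(k))(1 - R_2(k))$$

Compute the component reliabilities at $k = 5$:

$$R_1(5) = (0.95)^5 \approx 0.774$$

$$R_2(5) = (0.97)^5 \approx 0.859$$

Now substitute into the system formula:

$$R_{\text{parallel}}(5) = 1 - (1 - 0.774)(1 - 0.859)$$

$$R_{\text{parallel}}(5) \approx 0.968$$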

Interpretation

Parallel redundancy improves reliability: the two detectors together have about 96.8% chance of functioning after 5 days, compared to ~77% and ~86% individually.

Problem 2 — 2-out-of-3 Edge Server Cluster

A cluster of 3 edge servers supports IoT devices using 2-out-of-3 redundancy (system works if at least 2 servers survive).

Each server has reliability:

$$R(k) = (0.99)^k$$

Compute the system reliability at k=50 cycles.

Solution

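- Single-server reliability:

$$R_{\text{single}} = (0.99)^{50} \approx 0.605$$

- For a 2-out-of-3 system, reliability is:

$$R_{\text{2-of-3}} = \binom{3}{2} R^2 (1 - R) + \binom{3}{3} R^3$$

Simplify:

$$R_{\text{2-of-3}} = 3R^2(1 - R) + R^3$$

- Substituting $R = 0.605$:

$$R_{\text{2-of-3}} \approx 3(0.605^2)(0.395) + (0.605^3)$$

$$R_{\text{2-of-3}} \approx 0.655$$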

Interpretation

Each server alone has ~60.5% chance to survive 50 cycles.

With 2-out-of-3 redundancy, the system reliability improves to about 65.5%.
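The 2-out-of-3 calculation generalizes to any k-out-of-n system of identical, independent components through the binomial distribution. The sketch below introduces a helper, `k_out_of_n_reliability`, for illustration and uses it to re-check the result of Problem 2.

```python
from math import comb

def k_out_of_n_reliability(k, n, r):
    """Probability that at least k of n identical independent components work."""
    return sum(comb(n, j) * r**j * (1 - r) ** (n - j) for j in range(k, n + 1))

r_single = 0.99 ** 50                       # single-server reliability at 50 cycles
r_system = k_out_of_n_reliability(2, 3, r_single)
print(f"single server: {r_single:.3f}, 2-out-of-3 cluster: {r_system:.3f}")
```

Setting `k = 1` recovers the parallel formula and `k = n` recovers the series formula, so both earlier cases are special instances of this function.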

[Interactive demo: Digital Twin - Industrial Equipment Monitor. Real-time monitoring and predictive analytics for manufacturing equipment. Panels: Temperature & Vibration Trends, Power Consumption & Motor Speed, Equipment Status, Recent Alerts, Digital Twin Concepts Demonstrated.]

5G, 6G and Internet-of-Senses

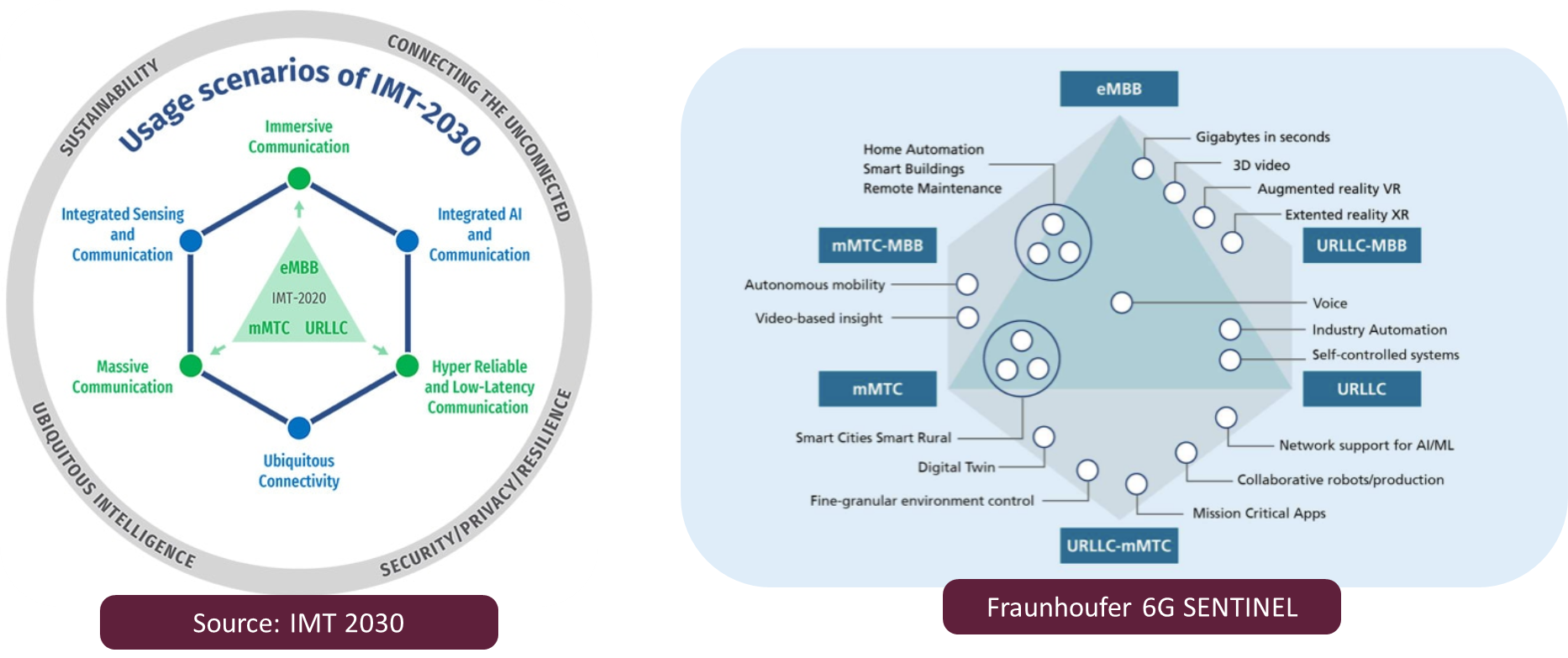

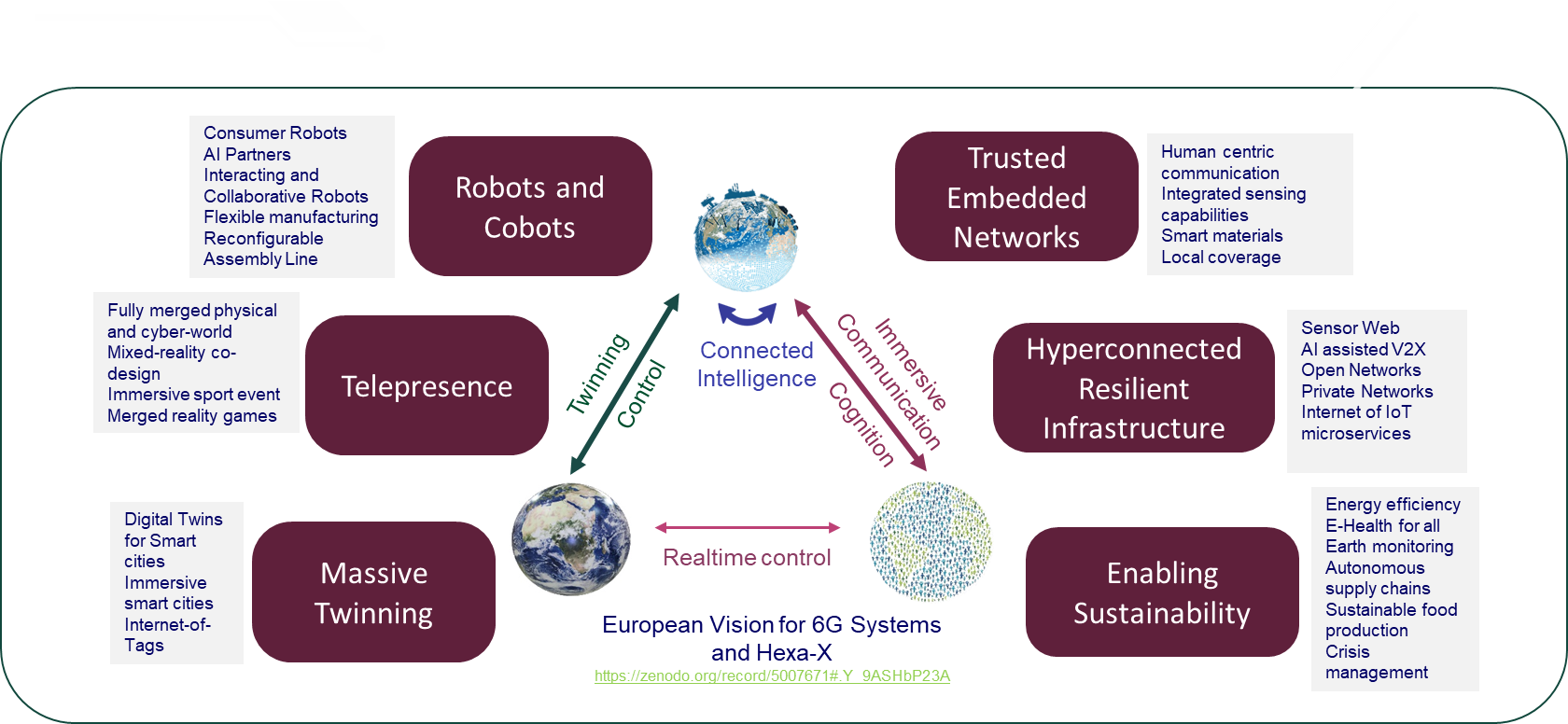

Previous generations of cellular connectivity focused on offering triple play (voice, video and data). 5G promised a departure from this by allowing massive connectivity between machines, paving the way for machine-to-machine communication. 5G was all about enabling three service classes, i.e.,

- Ultra-Reliable and Low-Latency Communication (URLLC),

- Massive Machine-Type Communication (mMTC),

- Enhanced Mobile Broadband (eMBB).

These encompass a variety of use-cases, from IIoT to robotics. Building on the promises of 5G, 6G aims to advance the vision by introducing three new dimensions:

- Integration of AI

- Integration of Sensing and Communication (ISAC)

- Ubiquitous Connectivity through Non-Terrestrial Networks.

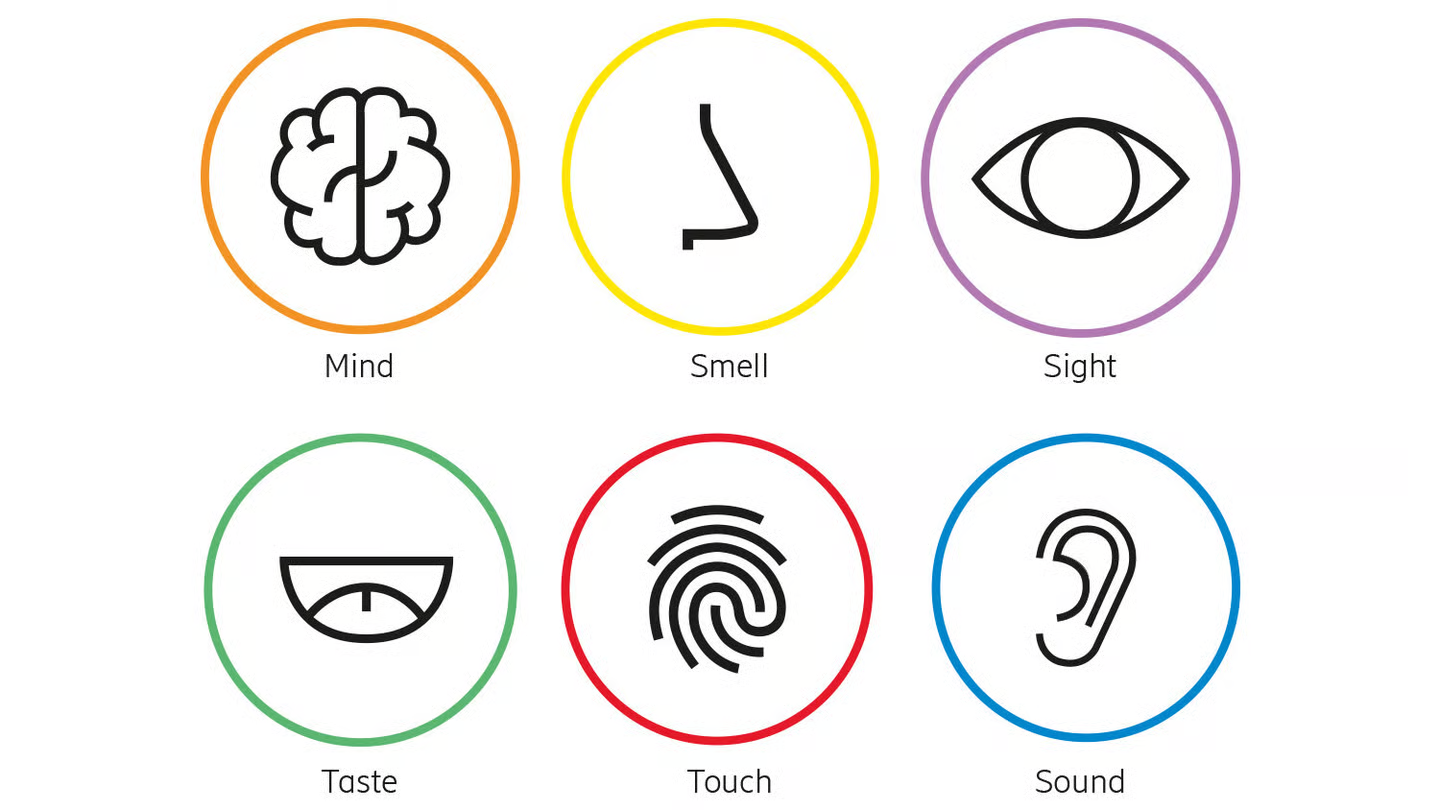

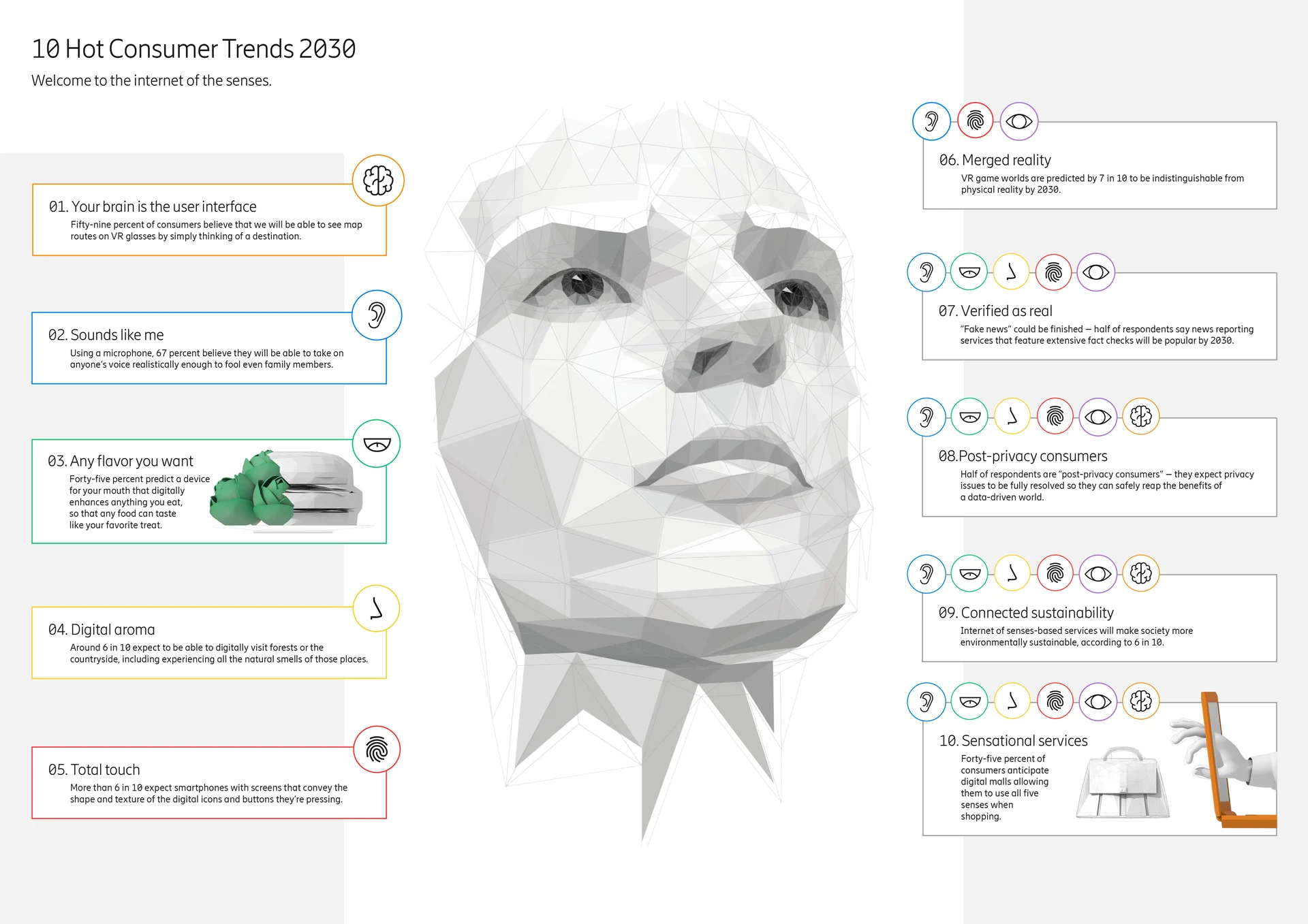

Internet-of-Senses

So far, we have been able to communicate only one of the senses (sound). True teleportation is only possible by fusing all senses in an immersive environment. The Internet-of-Senses (IoS) vision is built around the capability to sense, encode, and transmit all senses. This is only possible through the convergence of IoT, edge compute, XR and digital twin technologies. IoS is no longer a sci-fi vision; with the advent of GenAI it seems realistically achievable in the next few years. Several advances have also been made in sensing technologies thanks to new materials.

Ericsson: Read this article for details: https://www.ericsson.com/en/reports-and-papers/consumerlab/reports/10-hot-consumer-trends-2030

In all cases latency plays a crucial role in determining the performance.

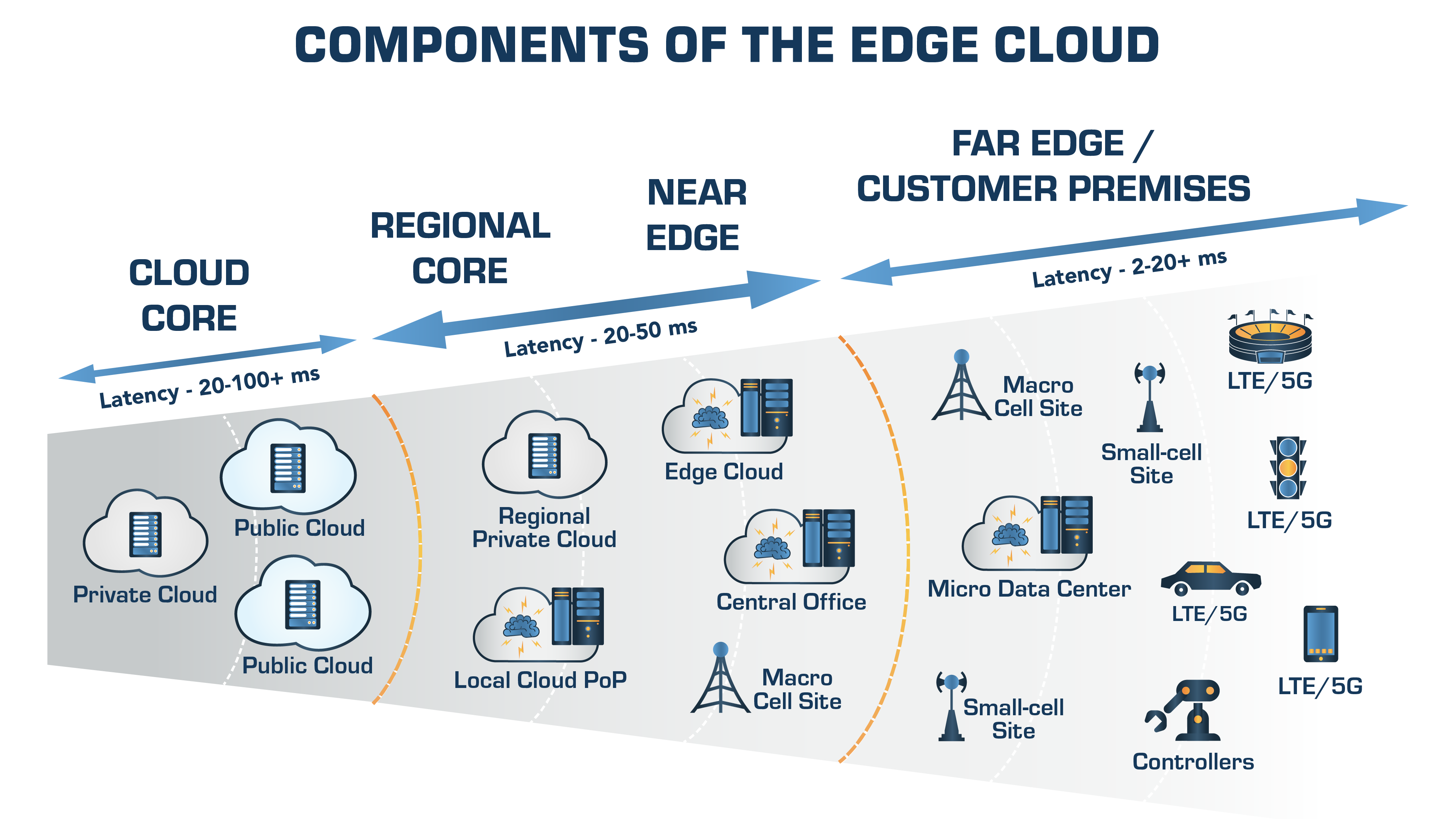

The latency is also a function of where the inference actually happens. The term edge computing is rather vaguely defined. It is useful to distinguish the deep-edge (often the user device), the far-edge (e.g. a gateway) and city-level local compute deployments (near-edge), which complement the cloud-based services provided by the data-center.
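The effect of placement on latency can be sketched as a simple budget check across the tiers named above. All figures below are hypothetical placeholders, not measurements: each tier is given an assumed network round-trip time and an assumed inference time, reflecting the intuition that tiers further from the device have more compute but a longer network path.

```python
# (tier, assumed network round trip in ms, assumed inference time in ms)
TIERS = [
    ("deep-edge", 0.0, 50.0),   # on the user device: no network, slow compute
    ("far-edge", 5.0, 20.0),    # e.g. a gateway
    ("near-edge", 15.0, 8.0),   # city-level local compute
    ("cloud", 80.0, 2.0),       # remote data-center
]

def feasible_tiers(budget_ms):
    """Return the tiers whose round trip plus inference time fits the budget."""
    return [name for name, rtt_ms, infer_ms in TIERS if rtt_ms + infer_ms <= budget_ms]

for budget in (10.0, 30.0, 100.0):
    print(f"budget {budget:5.1f} ms -> {feasible_tiers(budget)}")
```

With these assumed numbers a 30 ms budget rules out both the device itself and the cloud, which is the kind of situation where intermediate edge tiers become useful.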
