Lecture 4 - Part 1

Introduction to Detection

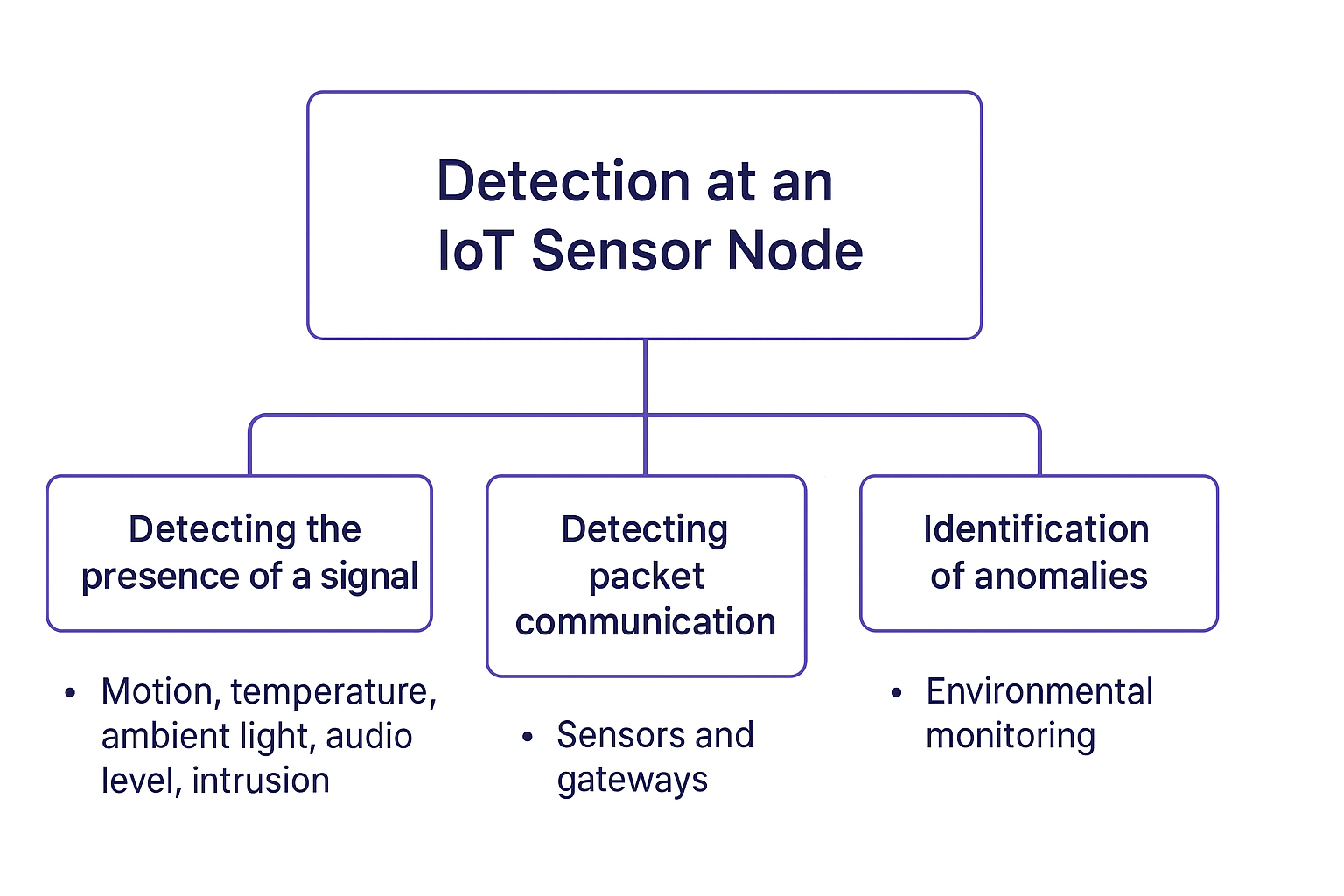

One of the key functions of an IoT sensor node is to detect an event. Detection tasks include:

- Detecting motion, an intrusion, or a change in temperature, ambient light or audio level. All of these tasks amount to detecting the presence or absence of a certain signal.

- Detecting the packets that carry these readings between a sensor and the gateway. In other words, we often need to detect a packet first and then decode it to recover the values.

- Identification of anomalies, e.g. in the environmental monitoring case (increase in CO, particulate matter levels etc.).

Detecting certain attributes is also key to triggering more complex processing operations. For instance, consider audio IoT sensors deployed at scale in the wild to detect the presence of certain species of birds or animals [1]. Rather than capturing and processing sound continuously, IoT systems (which typically have multiple MCU cores) customarily detect the sound first and only then trigger the complex classification pipeline. This avoids unnecessary battery usage on the sensors and increases the operational lifetime of the deployment.

A detection problem can be cast as a simple hypothesis testing problem. Under each hypothesis, the desired attribute follows a completely known distribution. The classical approach to detection is based on the Neyman-Pearson theorem, while the Bayesian approach is based on Bayes risk minimisation. When the distribution of the signal is not exactly known, energy detection can be employed. We will explore only these aspects, alongside some representative use cases in which they are employed in IoT deployments.

Neyman-Pearson Criterion

To appreciate the Neyman-Pearson criterion, let us start with a very simple example of binary hypothesis testing. Assume that we observe a single realisation (sample) of a random variable whose PDF is either $\mathcal{N}(0,1)$ or $\mathcal{N}(1,1)$. The hypothesis testing problem is to determine from a single sample $x[0] \sim \mathcal{N}(\mu,1)$ whether $\mu = 0$ or $\mu = 1$, i.e. which distribution the sample belongs to. Mathematically,

$$
\begin{aligned}
H_0 &: \mu = 0 \quad \text{or} \quad x[0] = w[0], \\
H_1 &: \mu = 1 \quad \text{or} \quad x[0] = s[0] + w[0],
\end{aligned}
$$

where $w[0] \sim \mathcal{N}(0,1)$ and $s[0] = 1$. Here $H_0$ is referred to as the null hypothesis and $H_1$ as the alternative hypothesis. The PDFs under both hypotheses are shown below, with the difference in $\mu$ accounting for the shift to the right under $H_1$. As you can see, it is difficult to tell from a single sample which of the two distributions it belongs to, especially given the overlap between them. The decision can be made on the basis of a fixed threshold, indicated in the figure by the dashed black line. For the discussion that follows, fix the threshold at $x[0] = 0.5$.

This presents one possible approach: if $x[0] > \tfrac{1}{2}$, it is more likely that $x[0]$ was generated under $H_1$, so we decide that hypothesis $H_1$ is true. Equivalently, if $x[0] > \tfrac{1}{2}$ then $p(x[0] \mid H_1) > p(x[0] \mid H_0)$. The detector therefore simply compares the observed $x[0]$ with a threshold, say $\gamma = \tfrac{1}{2}$. With this approach we can make two types of error:

- If we decide $H_1$ when $H_0$ is true, we make a Type I error. The probability of a Type I error, $P(H_1 \mid H_0)$, is known as the probability of false alarm and is denoted by $P_{FA}$.

- If we decide $H_0$ when $H_1$ is true, we make a Type II error. The probability of a Type II error, $P(H_0 \mid H_1)$, is known as the probability of missed detection and is denoted by $P_{MD}$. It satisfies $P_{MD} = 1 - P_D = 1 - P(H_1 \mid H_1)$, where $P_D$ is the probability of detection.

As you will notice from the figure above, it is not possible to reduce both error probabilities simultaneously. Reducing one increases the other, i.e., there is an inherent trade-off between $P_{FA}$ and $P_{MD}$. Consequently, a sensible approach to designing an optimal detector is to hold one of these probabilities constant while minimising the other: for instance, fix $P_{FA} = \epsilon$, with $\epsilon$ a small constant, and minimise $P_{MD}$.
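The trade-off can be checked numerically. The following is a minimal sketch for the single-sample example above, assuming the rule "decide $H_1$ if $x[0]$ exceeds the threshold"; the function name `error_probabilities` and its parameters are illustrative and not part of the lecture material.

```python
from statistics import NormalDist


def error_probabilities(threshold, mu0=0.0, mu1=1.0, sigma=1.0):
    """Return (P_FA, P_MD) for the rule: decide H1 if x[0] > threshold."""
    # P_FA: probability that a sample drawn under H0 exceeds the threshold.
    p_fa = 1.0 - NormalDist(mu0, sigma).cdf(threshold)
    # P_MD: probability that a sample drawn under H1 falls at or below it.
    p_md = NormalDist(mu1, sigma).cdf(threshold)
    return p_fa, p_md


# Sweep a few thresholds: raising the threshold lowers P_FA but raises P_MD.
for gamma in (0.0, 0.5, 1.0, 2.0):
    p_fa, p_md = error_probabilities(gamma)
    print(f"threshold={gamma:.1f}  P_FA={p_fa:.4f}  P_MD={p_md:.4f}")
```

At the threshold 0.5 the two error probabilities coincide (both are about 0.31), because the threshold sits midway between the two means.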

Definition

The approach of selecting the detector that minimises the probability of missed detection, or equivalently maximises the probability of detection, while keeping the probability of false alarm constant is known as the Neyman-Pearson criterion. The Neyman-Pearson test is a likelihood ratio test whose threshold $\gamma$ is chosen such that the false alarm probability is exactly $\epsilon$. Among all tests with false alarm probability $\epsilon$, the likelihood ratio test achieves the highest probability of detection. In other words, to maximise $P_D$ for a given $P_{FA} = \epsilon$, decide $H_1$ if:

$$
L(\mathbf{x}) = \frac{p(\mathbf{x} \mid H_1)}{p(\mathbf{x} \mid H_0)} > \gamma,
$$

where $\mathbf{x} \in \mathbb{R}^N$ is the observation vector and the threshold $\gamma$ is found from

$$
P_{FA} = \int_{\{\mathbf{x} : L(\mathbf{x}) > \gamma\}} p(\mathbf{x} \mid H_0)\, d\mathbf{x} = \epsilon.
$$

In words, $L(\mathbf{x})$ is the likelihood ratio, and:

- If it’s larger than the threshold γ, we decide H1 (signal present).

- Otherwise, we decide H0 (signal absent).

The threshold is not arbitrary: it is chosen so that the probability of false alarm equals a predefined level $\epsilon$. Lastly, the integral

$$
P_{FA} = \int_{\{\mathbf{x} : L(\mathbf{x}) > \gamma\}} p(\mathbf{x} \mid H_0)\, d\mathbf{x} = \epsilon
$$

adds up the probability mass of all outcomes $\mathbf{x}$ that would make us mistakenly decide $H_1$ when $H_0$ is in fact true. That probability must equal $\epsilon$, which is a design parameter (for example, $\epsilon = 10^{-3}$).

Binary Hypothesis test

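For the hypothesis testing problem introduced above, we can easily find the NP test. Assume that we require $P_{FA} = 10^{-3}$. Then, from $L(\mathbf{x})$, we decide $H_1$ if

$$
\frac{p(\mathbf{x} \mid H_1)}{p(\mathbf{x} \mid H_0)} = \frac{\frac{1}{\sqrt{2\pi}} \exp\left[-\frac{1}{2}(x[0]-1)^2\right]}{\frac{1}{\sqrt{2\pi}} \exp\left[-\frac{1}{2}x^2[0]\right]} > \gamma
$$

or

$$
\exp\left[-\frac{1}{2}\left(x^2[0] - 2x[0] + 1 - x^2[0]\right)\right] > \gamma
$$

or finally

$$
\exp\left(x[0] - \frac{1}{2}\right) > \gamma.
$$

At this point we could determine $\gamma$ from the false alarm constraint

$$
P_{FA} = P\left\{\exp\left(x[0] - \frac{1}{2}\right) > \gamma \,\middle|\, H_0\right\} = 10^{-3}.
$$

A simpler approach is to take logarithms (since the logarithm is monotonic). So we decide $H_1$ if

$$
x[0] > \ln\gamma + \frac{1}{2}.
$$

Letting $\gamma' = \ln\gamma + \frac{1}{2}$, we decide $H_1$ if $x[0] > \gamma'$. To find $\gamma'$ explicitly we use the $P_{FA}$ constraint: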

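$$
P_{FA} = P\{x[0] > \gamma' \mid H_0\} = \int_{\gamma'}^{\infty} \frac{1}{\sqrt{2\pi}} \exp\left[-\frac{1}{2}x^2\right] dx = 10^{-3}.
$$

Thus $\gamma' = Q^{-1}(10^{-3}) \approx 3.09$, where $Q(\cdot)$ is the complementary CDF of the standard normal distribution; we round this to $\gamma' = 3$ for simplicity. The NP test is to decide $H_1$ if $x[0] > 3$. The detection probability is then

$$
P_D = P\{x[0] > 3 \mid H_1\} = \int_{3}^{\infty} \frac{1}{\sqrt{2\pi}} \exp\left[-\frac{1}{2}(x-1)^2\right] dx = Q(2) \approx 0.023.
$$

If instead we require $P_{FA} = 0.5$, then the threshold is found from

$$
0.5 = \int_{\gamma'}^{\infty} \frac{1}{\sqrt{2\pi}} \exp\left[-\frac{1}{2}x^2\right] dx,
$$

which gives $\gamma' = 0$. Then

$$
P_D = \int_{0}^{\infty} \frac{1}{\sqrt{2\pi}} \exp\left[-\frac{1}{2}(x-1)^2\right] dx = \int_{0}^{\infty} \frac{1}{\sqrt{2\pi}} \exp\left[-\frac{1}{2}\left(x^2 - 2x + 1\right)\right] dx = 1 - Q(1) \approx 0.84.
$$

This shows that a higher $P_D$ can be obtained in exchange for tolerating a higher $P_{FA}$.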
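The two design points above can be reproduced with a few lines of Python. This is a sketch using only the standard library; the helper names `q_function`, `q_inverse` and `np_single_sample` are illustrative. Note that the exact threshold for $P_{FA} = 10^{-3}$ is about 3.09, so the exact $P_D$ (about 0.018) is slightly lower than the value obtained with the rounded threshold of 3.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal N(0, 1)


def q_function(x):
    """Q(x): probability that a standard normal variable exceeds x."""
    return 1.0 - STD_NORMAL.cdf(x)


def q_inverse(p):
    """Inverse of the Q-function, valid for 0 < p < 1."""
    return STD_NORMAL.inv_cdf(1.0 - p)


def np_single_sample(p_fa, mu1=1.0):
    """NP test for N(0,1) versus N(mu1,1) from a single sample x[0].

    Returns the threshold on x[0] and the resulting detection probability.
    """
    threshold = q_inverse(p_fa)          # P(x[0] > threshold | H0) = p_fa
    p_d = q_function(threshold - mu1)    # P(x[0] > threshold | H1)
    return threshold, p_d


for p_fa in (1e-3, 0.5):
    threshold, p_d = np_single_sample(p_fa)
    print(f"P_FA={p_fa:g}  threshold={threshold:.3f}  P_D={p_d:.4f}")
```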

General Problem Setup for Detection of DC Buried in Noise

Consider the binary hypothesis testing problem:

$$
\begin{aligned}
H_0 &: x[n] = w[n] && \text{(noise only)} \\
H_1 &: x[n] = A + w[n] && \text{(signal + noise)}
\end{aligned}
$$

where:

- x[n] is the observed signal at time n

- A is a known constant amplitude

- $w[n] \sim \mathcal{N}(0, \sigma^2)$ is additive white Gaussian noise

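For $N$ observations $\mathbf{x} = [x[0], x[1], \ldots, x[N-1]]^T$, the hypotheses become:

$$
\begin{aligned}
H_0 &: \mathbf{x} = \mathbf{w} \\
H_1 &: \mathbf{x} = A\mathbf{1} + \mathbf{w}
\end{aligned}
$$

where $\mathbf{1} = [1, 1, \ldots, 1]^T$ and $\mathbf{w} \sim \mathcal{N}(\mathbf{0}, \sigma^2\mathbf{I})$.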

Likelihood Functions

Under each hypothesis, the likelihood functions are:

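$$
\begin{aligned}
p(\mathbf{x} \mid H_0) &= \frac{1}{(2\pi\sigma^2)^{N/2}} \exp\left(-\frac{1}{2\sigma^2} \sum_{n=0}^{N-1} x[n]^2\right) \\
p(\mathbf{x} \mid H_1) &= \frac{1}{(2\pi\sigma^2)^{N/2}} \exp\left(-\frac{1}{2\sigma^2} \sum_{n=0}^{N-1} (x[n] - A)^2\right)
\end{aligned}
$$

Likelihood Ratio Test (LRT)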

The likelihood ratio is:

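$$
L(\mathbf{x}) = \frac{p(\mathbf{x} \mid H_1)}{p(\mathbf{x} \mid H_0)} = \exp\left(\frac{A}{\sigma^2} \sum_{n=0}^{N-1} x[n] - \frac{NA^2}{2\sigma^2}\right)
$$

Taking the natural logarithm:

$$
\ln L(\mathbf{x}) = \frac{A}{\sigma^2} \sum_{n=0}^{N-1} x[n] - \frac{NA^2}{2\sigma^2}
$$

The LRT decision rule is:

$$
\ln L(\mathbf{x}) \;
\begin{cases}
> \ln\gamma & \text{decide } H_1 \\
< \ln\gamma & \text{decide } H_0
\end{cases}
$$

Assuming $A > 0$ (so that dividing by $A$ preserves the direction of the inequality), this simplifies to the test statistic:

$$
T(\mathbf{x}) = \sum_{n=0}^{N-1} x[n] \;
\begin{cases}
> \gamma' & \text{decide } H_1 \\
< \gamma' & \text{decide } H_0
\end{cases}
$$

where $\gamma' = \dfrac{\sigma^2}{A} \ln\gamma + \dfrac{NA}{2}$.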
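The resulting detector is just a sum compared against a threshold. A minimal sketch follows, assuming $A > 0$ and a likelihood ratio threshold $\gamma > 0$; the function name `dc_level_lrt` and the simulation parameters in the usage lines are illustrative choices, not values from the lecture.

```python
import math
import random


def dc_level_lrt(x, amplitude, sigma, gamma):
    """LRT for a known DC level A > 0 in white Gaussian noise.

    Returns (decide_h1, statistic, threshold), where the statistic is
    T(x) = sum of the samples and the threshold is
    gamma' = (sigma^2 / A) * ln(gamma) + N * A / 2.
    """
    if amplitude <= 0 or gamma <= 0:
        raise ValueError("this sketch assumes amplitude > 0 and gamma > 0")
    n = len(x)
    statistic = sum(x)
    threshold = (sigma ** 2 / amplitude) * math.log(gamma) + n * amplitude / 2.0
    return statistic > threshold, statistic, threshold


# Usage: simulate N noisy samples under each hypothesis (illustrative values).
random.seed(0)
N, A, SIGMA = 50, 0.5, 1.0
noise_only = [random.gauss(0.0, SIGMA) for _ in range(N)]
signal_plus_noise = [A + random.gauss(0.0, SIGMA) for _ in range(N)]

for name, samples in (("H0 data", noise_only), ("H1 data", signal_plus_noise)):
    decide_h1, stat, thr = dc_level_lrt(samples, A, SIGMA, gamma=1.0)
    print(f"{name}: T(x)={stat:.2f}  threshold={thr:.2f}  decide H1: {decide_h1}")
```

With $\gamma = 1$ the threshold reduces to $NA/2$, i.e. the sample mean is compared with $A/2$.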

Neyman-Pearson Criterion

The Neyman-Pearson lemma states that for a given probability of false alarm PFA=α, the likelihood ratio test maximizes the probability of detection PD (or equivalently, minimizes the probability of missed detection).

For our problem:

- Under $H_0$: $T(\mathbf{x}) \sim \mathcal{N}(0, N\sigma^2)$

- Under $H_1$: $T(\mathbf{x}) \sim \mathcal{N}(NA, N\sigma^2)$

The probability of false alarm is:

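$$
P_{FA} = P(T > \gamma' \mid H_0) = Q\left(\frac{\gamma'}{\sqrt{N\sigma^2}}\right)
\quad\Longrightarrow\quad
\frac{\gamma'}{\sqrt{N\sigma^2}} = Q^{-1}(P_{FA})
$$

The probability of detection is:

$$
P_D = P(T > \gamma' \mid H_1) = Q\left(\frac{\gamma' - NA}{\sqrt{N\sigma^2}}\right) = Q\left(Q^{-1}(P_{FA}) - \sqrt{\frac{NA^2}{\sigma^2}}\right)
$$

where $Q(\cdot)$ is the Q-function (the complement of the standard normal CDF). The quantity $\mathrm{ENR} = \dfrac{NA^2}{\sigma^2}$ is known as the energy-to-noise ratio and can be expressed in dB as $10\log_{10}(\mathrm{ENR})$. The figure below shows the impact of varying the ENR on $P_D$ for various values of $P_{FA}$.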
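The dependence of $P_D$ on the ENR can be tabulated directly from the closed-form expression. The sketch below uses only the Python standard library; the function name `detection_probability` and the particular grid of $P_{FA}$ and ENR values are illustrative assumptions.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal N(0, 1)


def detection_probability(p_fa, enr_db):
    """P_D = Q(Q^{-1}(P_FA) - sqrt(ENR)), with the ENR given in dB."""
    enr = 10.0 ** (enr_db / 10.0)                 # convert dB to linear scale
    q_inv = STD_NORMAL.inv_cdf(1.0 - p_fa)        # Q^{-1}(P_FA)
    return 1.0 - STD_NORMAL.cdf(q_inv - math.sqrt(enr))


# Tabulate P_D against ENR for several false alarm probabilities.
for p_fa in (1e-1, 1e-3, 1e-5):
    row = "  ".join(
        f"{detection_probability(p_fa, enr_db):.3f}" for enr_db in (0, 5, 10, 15, 20)
    )
    print(f"P_FA={p_fa:g}: {row}")
```

For a fixed $P_{FA}$, $P_D$ increases monotonically with the ENR; as the ENR tends to zero, $P_D$ approaches $P_{FA}$.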

Receiver Operating Characteristic (ROC)

A Receiver Operating Characteristic (ROC) curve is a graphical tool used to evaluate the performance of a binary classifier system by illustrating the trade-off between PD and PFA across different decision thresholds. Each point on the curve corresponds to a particular threshold, showing how increasing sensitivity (detecting more true positives) often comes at the cost of increased false alarms. The ROC curve provides a comprehensive view of a system’s discriminative ability, with curves closer to the top-left corner indicating better performance. The diagonal line PD=PFA represents random guessing.
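For the DC-level-in-noise detector, the ROC can be traced by sweeping the normalised threshold $\tau = \gamma'/\sqrt{N\sigma^2}$, which gives $P_{FA} = Q(\tau)$ and $P_D = Q(\tau - \sqrt{\mathrm{ENR}})$. The following is a sketch under that assumption; the function name `roc_points` and the sweep range are illustrative.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal N(0, 1)


def q_function(x):
    """Q(x): probability that a standard normal variable exceeds x."""
    return 1.0 - STD_NORMAL.cdf(x)


def roc_points(enr, num_points=201):
    """Return a list of (P_FA, P_D) pairs, one per threshold value."""
    shift = math.sqrt(enr)
    lo, hi = -5.0, shift + 5.0  # range wide enough to cover both tails
    points = []
    for k in range(num_points):
        tau = lo + (hi - lo) * k / (num_points - 1)
        points.append((q_function(tau), q_function(tau - shift)))
    return points


# Each point corresponds to one threshold; a higher ENR pushes the curve
# towards the top-left corner, while ENR = 0 gives the diagonal P_D = P_FA.
for enr in (0.0, 1.0, 4.0):
    sample = roc_points(enr, num_points=5)
    print(enr, [(round(pfa, 3), round(pd, 3)) for pfa, pd in sample])
```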

Generalization: Bayes Risk

The Neyman-Pearson approach fixes PFA and maximizes PD. A more general approach is to minimize the Bayes risk, which considers the costs of different decision outcomes and prior probabilities.

Bayes Risk Formulation

Let:

- $C_{ij}$ = cost of deciding $H_j$ when $H_i$ is true

- P(H0), P(H1) = prior probabilities

The average risk is:

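$$
\begin{aligned}
R ={}& C_{00}\, P(H_0)\, P(\text{decide } H_0 \mid H_0) + C_{01}\, P(H_0)\, P(\text{decide } H_1 \mid H_0) \\
&+ C_{10}\, P(H_1)\, P(\text{decide } H_0 \mid H_1) + C_{11}\, P(H_1)\, P(\text{decide } H_1 \mid H_1)
\end{aligned}
$$

Typically, we assume $C_{00} = C_{11} = 0$ (correct decisions have no cost), so:

$$
R = C_{01}\, P(H_0)\, P_{FA} + C_{10}\, P(H_1)\, P_{MD}
$$

where $P_{MD} = 1 - P_D$ is the probability of missed detection.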

Bayes Decision Rule

The Bayes optimal decision rule minimizes the expected risk:

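$$
\frac{p(\mathbf{x} \mid H_1)}{p(\mathbf{x} \mid H_0)} \;
\begin{cases}
> \dfrac{C_{01}\, P(H_0)}{C_{10}\, P(H_1)} & \text{decide } H_1 \\[2ex]
< \dfrac{C_{01}\, P(H_0)}{C_{10}\, P(H_1)} & \text{decide } H_0
\end{cases}
$$

This shows that the optimal threshold depends on:

- The cost ratio $C_{01}/C_{10}$

- The prior probability ratio $P(H_0)/P(H_1)$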
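To see the rule at work, the sketch below applies it to the earlier single-sample example ($\mathcal{N}(0,1)$ versus $\mathcal{N}(1,1)$), where $L(x) = \exp(x[0] - \tfrac{1}{2})$ and the likelihood ratio threshold therefore maps to a threshold of $\ln\gamma + \tfrac{1}{2}$ on $x[0]$. The names `bayes_threshold` and `bayes_risk` are illustrative; `c_fa` plays the role of $C_{01}$ (the cost multiplying $P_{FA}$) and `c_md` the role of $C_{10}$ (the cost multiplying $P_{MD}$), with correct decisions assumed to cost nothing.

```python
import math
from statistics import NormalDist


def bayes_threshold(c_fa, c_md, prior_h0):
    """Likelihood ratio threshold C01 * P(H0) / (C10 * P(H1))."""
    return (c_fa * prior_h0) / (c_md * (1.0 - prior_h0))


def bayes_risk(x_threshold, c_fa, c_md, prior_h0, mu1=1.0):
    """Risk R = C01 P(H0) P_FA + C10 P(H1) P_MD for 'decide H1 if x[0] > x_threshold'."""
    p_fa = 1.0 - NormalDist(0.0, 1.0).cdf(x_threshold)
    p_md = NormalDist(mu1, 1.0).cdf(x_threshold)
    return c_fa * prior_h0 * p_fa + c_md * (1.0 - prior_h0) * p_md


# Equal costs and priors: gamma = 1, so the threshold on x[0] is 0.5.
gamma = bayes_threshold(c_fa=1.0, c_md=1.0, prior_h0=0.5)
x_thr = math.log(gamma) + 0.5
print(f"gamma={gamma:.2f}  x threshold={x_thr:.2f}")

# The risk at the Bayes threshold is no larger than at nearby thresholds.
for candidate in (x_thr - 0.2, x_thr, x_thr + 0.2):
    print(f"x threshold={candidate:.2f}  risk={bayes_risk(candidate, 1.0, 1.0, 0.5):.4f}")
```

Making false alarms more costly (a larger `c_fa`) or the null hypothesis more probable (a larger `prior_h0`) raises the threshold, so the detector demands stronger evidence before deciding $H_1$.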

Special Cases

- Equal costs and priors: $C_{01} = C_{10}$ and $P(H_0) = P(H_1) = 0.5$ give a threshold of 1 (decide based on which hypothesis is more likely).

- Minimax criterion: choose the threshold to minimize the maximum possible risk.

- Neyman-Pearson: equivalent to Bayes with specific cost assignments.

The Bayes framework provides a unified approach that encompasses the Neyman-Pearson criterion as a special case, while allowing for incorporation of prior knowledge and decision costs.

References

[1] Pringle, S., Dallimer, M., Goddard, M.A. et al. Opportunities and challenges for monitoring terrestrial biodiversity in the robotics age. Nat Ecol Evol 9, 1031–1042 (2025). https://doi.org/10.1038/s41559-025-02704-9

[2] Kay, Steven M. Fundamentals of statistical signal processing: Detection theory. Prentice-Hall, Inc., 1993.