Lecture 6-Part 2

Contents

- Introduction

- 1. Double-Diamond Design Methodology

- 2. Design Thinking (Stanford d.school Model)

- 3. Biz-ML Methodology (Business-Driven ML)

- 4. Systems Thinking

- 5. Design Swigle (Agile Design–Engineering Hybrid)

- Integrated Framework

- The Architecture

- Deployment & Containerization

- Monitoring & Feedback Loops

- References

Introduction

The goal of this part is to show how various design methodologies can be applied to Edge AI and IoT. For each methodology, we cover:

- Its philosophy and process.

- How it maps to the ML lifecycle.

- Techniques used in each phase (e.g., persona discovery, system mapping).

- Concrete examples of adopting these design frameworks and methodological thinking.

- Strengths and challenges in real AI/IoT projects.

When designing an Edge AI–IoT solution, the Big Picture phase is where you decide what to build, why it matters, and how it fits into a real ecosystem. This stage bridges human-centered design, data-driven reasoning, and systems engineering.

Below are five complementary methodologies that can guide this phase — each explored in depth and grounded in Edge AI and IoT realities.

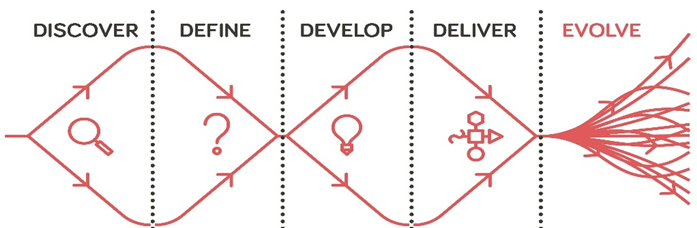

1. Double-Diamond Design Methodology

Double Diamond Design framework

Philosophy:

Developed by the British Design Council [1], the Double Diamond emphasizes divergent and convergent thinking. Essentially, it advocates exploring the problem broadly before narrowing down to focused, validated solutions.

Phases Applied to Edge AI & IoT

1. Discover — Diverge on Understanding

Goal: Understand the users, devices, services, organisational processes for service delivery, and the operational environment. This phase can be approached step by step. For instance, as an IoT architect you can:

- Conduct ethnographic studies, site visits, and sensor audits to understand the users, devices, and environment before crafting the detailed technical requirement specification.

- Identify friction points: e.g., latency in data transfer, energy constraints on devices, unreliable connectivity.

- Collect human stories that show where edge intelligence could add value.

Example:

Note

In an industrial IoT setup, maintenance engineers describe the difficulty of detecting bearing wear before catastrophic failure. Sensors exist, but the alert system is cloud-dependent and often delayed.

2. Define — Converge to a Clear Problem

Once we understand the problem, we need to translate qualitative findings into design insights. As an IoT architect you can begin by articulating a “How Might We” statement, e.g.,

“How might we enable real-time fault detection for rotating machinery in low-connectivity environments?”

These design insights need to be linked to quantitative, measurable success criteria (e.g., reduce downtime by 25% by using local ML deployment). This should also prompt follow-up questions: what is the cost of attaining this, what value does it add, and is there a return on investment once the capital and operational expenses needed to support deployment are accounted for?
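To make the return-on-investment question concrete, here is a minimal sketch of the arithmetic. The function name `simple_roi` and all monetary figures are hypothetical placeholders, not values from a real deployment; the only number taken from the text is the idea of a downtime target driving the benefit estimate.

```python
def simple_roi(capex, annual_opex, annual_benefit, years):
    """Return (roi, payback_years) for a deployment over a fixed horizon.

    roi is the net gain divided by the total cost over the horizon.
    payback_years is the time for net annual savings to cover the
    capital expense (None if the savings never cover it).
    """
    total_cost = capex + annual_opex * years
    total_benefit = annual_benefit * years
    roi = (total_benefit - total_cost) / total_cost
    net_annual = annual_benefit - annual_opex
    payback_years = capex / net_annual if net_annual > 0 else None
    return roi, payback_years


# Hypothetical figures: hardware and installation, yearly running cost,
# and the yearly value of the downtime avoided.
roi, payback = simple_roi(capex=40_000, annual_opex=5_000,
                          annual_benefit=30_000, years=3)
print(f"ROI over 3 years: {roi:.0%}, payback in {payback:.1f} years")
```

With these made-up inputs the benefit is 90,000 against a total cost of 55,000. A calculation of this kind is deliberately crude (no discounting, no risk), but it is usually enough to decide whether the problem statement is worth carrying into the Develop phase.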

3. Develop — Diverge with Ideas

Once the technical requirement specification is ready, brainstorm with cross-functional teams (AI engineers, designers, hardware specialists). This allows exploring design alternatives — on-device inference, compressed models, federated learning. Sketch the architecture options balancing performance, power, and connectivity.

4. Deliver — Converge by Prototyping

There is nothing better than building and iterating with users. Mockups, A/B tests, and Red/Green tests are all useful tools for iterating over the design. As an IoT architect, one should build minimal viable prototypes, e.g., lightweight ML models on a Raspberry Pi or Jetson Nano, often paired with a minimal local UI. These can then be validated with the actual users (e.g., technicians, doctors, manufacturers). This should allow you to capture feedback on usability, latency, and interpretability, which in turn lets you further refine the design.

Strengths of Double-Diamond Framework:

- The framework is structured and visually intuitive. This allows easy engagement with end users and service providers.

- The framework balances the creativity and technical discipline required to meet the end goal.

- The framework makes it easy to communicate with mixed teams and to track progress.

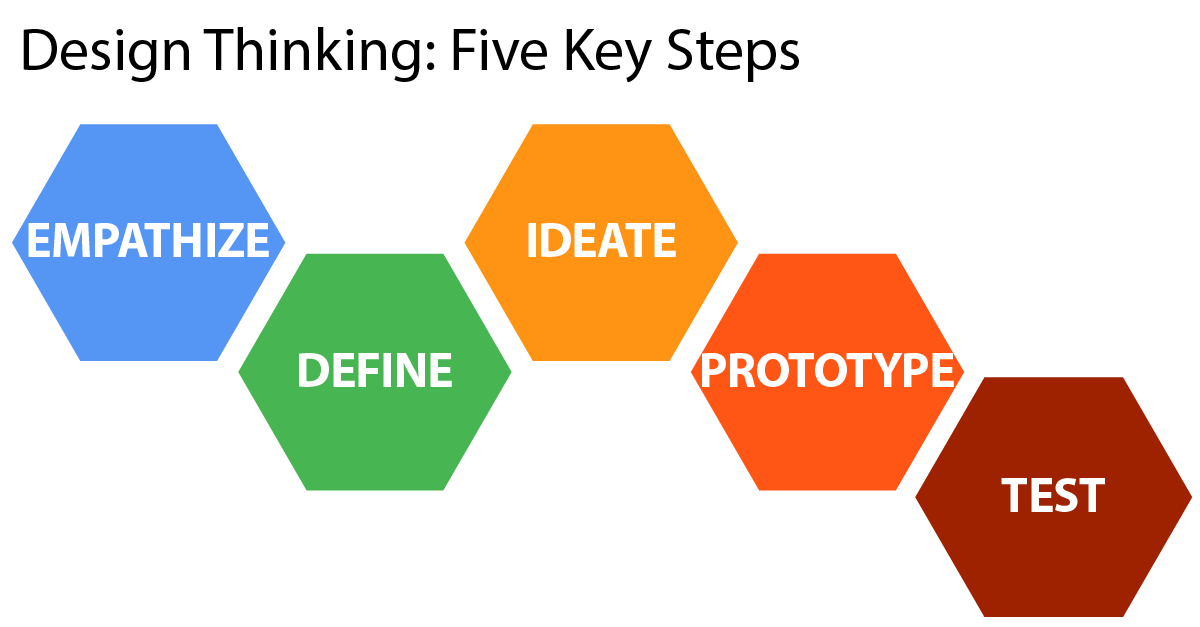

2. Design Thinking (Stanford d.school Model)

Stanford Design Thinking Framework

Philosophy:

Empathy-driven and iterative, focusing on solving the right problem through continuous learning and prototyping [2].

Phases Applied to Edge AI & IoT

1. Empathize

Observe real contexts of the current service use and discuss the potential transformation. Understand emotional and functional pain points. This should allow mapping interactions between people, devices, and AI outputs.

Note

Example: A nurse expresses frustration with false alarms from patient sensors — a cue for better edge filtering.

2. Define

The core objective of this phase is to arrive at a user-centered problem statement.

Note

Example: “Nurses need reliable alerts that reduce alarm fatigue.”

3. Ideate

During this phase, one can run co-creation workshops with users and engineers. The focus of these could be in exploring “What if?” questions (e.g., What if sensors learned patient baselines locally?).

4. Prototype

The ideation phase should provide solid grounds to build minimal IoT prototypes or mock data streams. This should allow testing of the key assumptions, not full systems.
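As an illustration of testing one assumption with a mock data stream, the sketch below simulates a patient sensor and checks the "What if sensors learned patient baselines locally?" idea with an exponential moving average. Everything here is an assumption made for the example: the signal is taken to be heart rate, and the names `mock_heart_rate_stream` and `LocalBaselineAlarm`, the thresholds, and the noise levels are all hypothetical.

```python
import random


def mock_heart_rate_stream(n, baseline=70.0, noise=2.0, spike_at=None, seed=0):
    """Yield n synthetic readings, with an optional sustained jump at spike_at."""
    rng = random.Random(seed)
    for i in range(n):
        value = baseline + rng.gauss(0.0, noise)
        if spike_at is not None and i >= spike_at:
            value += 30.0  # simulated genuine event
        yield value


class LocalBaselineAlarm:
    """Track a per-patient baseline with an exponential moving average."""

    def __init__(self, alpha=0.05, threshold=15.0, warmup=20):
        self.alpha = alpha          # how quickly the baseline adapts
        self.threshold = threshold  # deviation that counts as an alarm
        self.warmup = warmup        # readings to observe before alarming
        self.baseline = None
        self.seen = 0

    def update(self, value):
        """Return True if the reading deviates from the learned baseline."""
        self.seen += 1
        if self.baseline is None:
            self.baseline = value
            return False
        deviation = abs(value - self.baseline)
        alarm = self.seen > self.warmup and deviation > self.threshold
        if not alarm:
            # Adapt the baseline only on readings considered normal.
            self.baseline += self.alpha * (value - self.baseline)
        return alarm


detector = LocalBaselineAlarm()
alarms = [i for i, v in enumerate(mock_heart_rate_stream(100, spike_at=80))
          if detector.update(v)]
print("first alarm at sample", alarms[0] if alarms else None)
```

A prototype like this does not validate a clinical system. It only checks whether a locally learned baseline stays quiet on ordinary noise and still reacts to a sustained change, which is the kind of narrow assumption this phase is meant to test.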

5. Test

The integrated systems can then be deployed in real environments. The aim should be to gather both technical and human feedback.

Strengths:

Deep empathy, encourages innovation.

Challenges:

Less structured for data and ML lifecycle planning.

3. Biz-ML Methodology (Business-Driven ML)

Philosophy:

Biz-ML emphasizes that every ML project should deliver clear business or operational value. It aligns data science, engineering, and business strategy to ensure that ML solutions solve tangible, measurable problems. The model builds on a popular analytics-centred approach called CRISP-DM (Cross-Industry Standard Process for Data Mining) [3].

The Six Steps of BizML

1. Value

Purpose

Establish why the ML solution exists. Align goals with measurable business outcomes and operational impact.

Edge AI / IoT Example

Predictive maintenance in wind turbines: Reduce downtime by 20% and optimize energy consumption of IoT sensors.

2. Target

Purpose

Define what the model predicts for each case, ensuring relevance to business objectives.

Edge AI / IoT Example

Predict Remaining Useful Life (RUL) of a pump using vibration and temperature readings from edge sensors.

3. Performance

Purpose

Establish KPIs to measure success before model training, including technical and business metrics.

Edge AI / IoT Example

Technical metric: Prediction accuracy ≥ 90%. Business metric: Reduction in unplanned downtime ≥ 20%.

4. Fuel

Purpose

Prepare high-quality data for training, ensuring it reflects real-world operational conditions.

Edge AI / IoT Example

Aggregate and clean historical vibration and temperature data from sensors. Normalize and remove noise for on-device inference.

5. Algorithm

Purpose

Train predictive models aligned with technical constraints and business objectives.

Edge AI / IoT Example

TinyML models or quantized neural networks deployed on microcontrollers or edge GPUs, tuned for latency, accuracy, and energy efficiency.
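To show what "quantized" means in practice, the following sketch applies symmetric per-tensor int8 quantization to a handful of weights. It is a simplified illustration in plain Python, not the procedure of any particular toolchain; the weight values and the function names `quantize_int8` and `dequantize` are made up for the example.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: floats -> int8 values plus one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest step and clamp to the symmetric int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map int8 values back to approximate floats."""
    return [v * scale for v in q]


weights = [0.42, -1.27, 0.03, 0.88, -0.51]  # made-up layer weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight now needs one byte instead of four, at the cost of a rounding error of at most half a quantization step. Whether that error is acceptable has to be checked against the accuracy KPI set in the Performance step.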

6. Launch

Purpose

Deploy models into production, integrate into workflows, and maintain performance over time.

Edge AI / IoT Example

Containerized deployment to edge nodes with continuous monitoring of predictions, device health, and business KPIs. OTA updates for retraining.

Mapping BizML Six Steps to Edge AI/IoT Workflow

| BizML Step | Edge AI/IoT Implementation | Example |
|---|---|---|
| Value | Define business impact & operational goals | Reduce downtime by 20% in industrial turbines |
| Target | Specify prediction objective per device | Predict failure of pump based on vibration patterns |
| Performance | Set KPIs linking ML metrics & business outcomes | Accuracy ≥ 90%, downtime reduction ≥ 20% |
| Fuel | Collect & prepare sensor data | Clean and normalize vibration & temperature data |
| Algorithm | Train and optimize models for edge constraints | TinyML or quantized neural networks for on-device inference |
| Launch | Deploy, integrate into workflows, monitor KPIs | Containerized edge deployment with OTA updates |
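The Performance row pairs a technical metric with a business metric, and the two can disagree. A minimal sketch of checking both together is shown below; the function name `kpi_report` and the downtime figures are hypothetical, while the 90% and 20% thresholds are the ones used in the example above.

```python
def kpi_report(accuracy, baseline_downtime_h, current_downtime_h,
               min_accuracy=0.90, min_downtime_reduction=0.20):
    """Compare measured results against the technical and business targets."""
    reduction = (baseline_downtime_h - current_downtime_h) / baseline_downtime_h
    return {
        "accuracy_ok": accuracy >= min_accuracy,
        "downtime_reduction": reduction,
        "downtime_ok": reduction >= min_downtime_reduction,
    }


# Hypothetical measurements: hours of unplanned downtime per quarter.
report = kpi_report(accuracy=0.93, baseline_downtime_h=120.0,
                    current_downtime_h=90.0)
missed = kpi_report(accuracy=0.95, baseline_downtime_h=120.0,
                    current_downtime_h=110.0)
print(report)
print(missed)
```

The second call illustrates why BizML fixes both metrics before training: a model can exceed its accuracy target and still miss the business goal, for example when alerts arrive too late for technicians to act on them.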

Example Workflow: Edge AI for Smart Logistics

- Value: Reduce delivery delays by 15% and optimize fuel usage.

- Target: Predict real-time ETA for each delivery vehicle.

- Performance: Accuracy ≥ 90% in ETA, fuel savings ≥ 10%.

- Fuel: Aggregate GPS, traffic, and weather data.

- Algorithm: Train regression model for edge deployment on in-vehicle microcontroller.

- Launch: Deploy containerized model, monitor ETA prediction drift, update with OTA updates.

4. Systems Thinking

Philosophy:

Views AI and IoT as interconnected systems — human, digital, and physical — emphasizing feedback loops and interdependencies.[4][5]

Application to Edge AI & IoT

- Map devices, gateways, cloud, and human actors.

- Identify leverage points and feedback loops.

- Integrate human and technical considerations (safety, trust, reliability).

Note

Example: Smart city traffic cameras: dependencies between sensor placement, network latency, and human decision-making.

Strengths:

Excellent for multi-stakeholder, complex IoT systems.

Challenges:

Abstract; requires facilitation expertise.

5. Design Swigle (Agile Design–Engineering Hybrid)

Philosophy:

Combines human-centered discovery and agile iteration, ideal for continuous improvement in Edge AI systems.

Phases Applied to Edge AI & IoT

- Understand: Rapid persona and context creation.

- Explore: Design sprints and digital twin simulation.

- Build: Minimal functional edge prototypes with telemetry.

- Evaluate: Collect feedback and refine models continuously.

Strengths:

Fast iteration, integrates MLOps feedback loops.

Challenges:

Informal; needs disciplined teams.

Integrated Framework

Phase 1: Human-Centered Discovery

Design Thinking + Double Diamond

Objective: Understand real user problems, context, and constraints.

Methods:

- Persona creation and journey mapping

- Empathic interviews and contextual inquiry

- Divergent–convergent ideation cycles (Double Diamond)

Deliverables: Validated problem statements, measurable goals, and opportunity areas for AI intervention.

Example: In a smart factory, field engineers struggle with connectivity downtime. Edge AI anomaly detection can reduce dependency on cloud updates.

Phase 2: System Context Mapping

Systems Thinking

Objective: Model the interactions among devices, humans, and data.

Methods:

- Causal loop and dependency diagrams

- Trade-off analysis between latency, compute, and energy

- Edge–Cloud continuum planning

Deliverables: Architecture blueprints and system interdependency maps.

Example: For a smart grid, Systems Thinking clarifies that real-time decisions must happen locally, while optimization runs in the cloud.
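The latency, compute, and energy trade-off behind the edge versus cloud decision can be sketched as a small selection rule. The `Placement` class, the `choose_placement` function, and every number below are assumptions chosen for illustration rather than measurements from a real grid.

```python
from dataclasses import dataclass


@dataclass
class Placement:
    name: str
    inference_ms: float    # time to run the model at this location
    network_rtt_ms: float  # round trip to reach it (0 for on-device)
    energy_mj: float       # energy drawn on the device per decision

    @property
    def latency_ms(self):
        return self.inference_ms + self.network_rtt_ms


def choose_placement(options, deadline_ms):
    """Pick the lowest-energy placement that still meets the latency deadline."""
    feasible = [o for o in options if o.latency_ms <= deadline_ms]
    if not feasible:
        return None
    return min(feasible, key=lambda o: o.energy_mj)


options = [
    Placement("edge", inference_ms=35.0, network_rtt_ms=0.0, energy_mj=12.0),
    Placement("cloud", inference_ms=5.0, network_rtt_ms=120.0, energy_mj=4.0),
]
fast = choose_placement(options, deadline_ms=50.0)    # tight control loop
slow = choose_placement(options, deadline_ms=1000.0)  # slower optimization task
print(fast.name, slow.name)
```

Under these assumed numbers the tight deadline forces the decision onto the edge device, while the relaxed deadline lets the cheaper cloud option win. This mirrors the split described in the smart grid example.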

Phase 3: Data-Driven Development

BizML

Objective: Translate design insights into business-aligned machine learning pipelines that deliver measurable value.

Six Steps of BizML:

- Value: Define deployment goal & business value proposition.

- Target: Establish prediction goal relevant to priorities.

- Performance: Set KPIs and evaluation metrics.

- Fuel: Collect, clean, transform, and format data.

- Algorithm: Train and tune predictive model.

- Launch: Deploy model & monitor performance.

Example: In precision agriculture, on-device regression models predict irrigation needs from soil moisture sensors, ensuring low-latency predictions and optimized edge performance.

Phase 4: Continuous Improvement

Design Swigle + MLOps

Objective: Enable continuous learning and evolution through data and user feedback.

Practices:

- CI/CD pipelines for ML workflows (automated training, testing, deployment)

- Continuous monitoring (model drift, hardware metrics, UX telemetry)

- Short iterative loops for design and retraining

Deliverables:

- Automated retraining pipelines

- Versioned models and datasets (MLflow, DVC)

- Monitoring dashboards (Prometheus, Grafana)

Example: In logistics, seasonal model drift is handled by automated retraining and iterative UI improvements to restore reliability.

Feedback Loops Across All Layers

- User feedback refines the design.

- Telemetry informs architectural tuning.

- Model drift triggers retraining.

- Business KPIs realign objectives.

The Architecture

Understanding the problem is the first step. Once the problem is understood, attention turns to the data and how it is processed in ML pipelines.

1. Get the Data

Data is the foundation of your ML system. In IoT and Edge AI systems:

- Sources: Sensors, gateways, embedded devices, edge servers, and cloud APIs.

- Challenges: Heterogeneity, latency, data loss, and synchronization.

- Best Practices:

  - Use data versioning (DVC) for traceability.

  - Apply data quality checks at the edge.

  - Collect metadata about environmental context (temperature, location, time).

  - Stream data via MQTT, Kafka, or RESTful APIs.
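A data quality check at the edge can be as simple as validating each reading before it is published. The sketch below is one possible shape for such a check; the `Reading` structure, the `check_reading` function, the valid range, and the staleness limit are all hypothetical, and the transport (MQTT, Kafka, or REST) is deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass
class Reading:
    sensor_id: str
    value: float
    timestamp: float                              # seconds since epoch
    metadata: dict = field(default_factory=dict)  # e.g. location, ambient temperature


def check_reading(reading, lo, hi, now, max_age_s=60.0):
    """Return a list of quality problems; an empty list means the reading passes."""
    problems = []
    if reading.value != reading.value:  # NaN is the only value not equal to itself
        problems.append("missing value")
    elif not (lo <= reading.value <= hi):
        problems.append("out of range")
    if now - reading.timestamp > max_age_s:
        problems.append("stale")
    if reading.timestamp > now:
        problems.append("timestamp in future")
    return problems


now = 1_000.0  # fixed clock so the example is reproducible
good = Reading("vib-01", 0.8, now - 1.0, {"location": "pump-3"})
bad = Reading("vib-01", float("nan"), now - 300.0)
print(check_reading(good, lo=0.0, hi=10.0, now=now))
print(check_reading(bad, lo=0.0, hi=10.0, now=now))
```

Rejecting or flagging bad readings on the device saves bandwidth and keeps obviously broken samples out of the versioned training data.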

2. Discover and Visualize the Data

- Use tools such as Pandas, Seaborn, and Power BI to visualize sensor data trends.

- Identify anomalies, gaps, and correlation patterns.

- For edge systems, visualize latency and reliability distributions as well.

3. Prepare the Data for ML Algorithms

- Clean noisy IoT data using filters (Kalman, Savitzky–Golay).

- Normalize sensor readings.

- Feature engineer domain-specific metrics (e.g., vibration RMS for predictive maintenance).

- Apply dimensionality reduction if needed for on-device efficiency (PCA, autoencoders).
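Three of the preparation steps above (filtering, normalization, and an RMS feature) can be sketched in plain Python. The Kalman filter here is the simplest scalar form, assuming a slowly varying signal; the noise variances and the sample temperatures are illustrative guesses, and the function names are hypothetical.

```python
import math
import statistics


def kalman_1d(measurements, process_var=1e-3, measurement_var=0.25):
    """Scalar Kalman filter for a slowly varying signal (constant-state model)."""
    estimate, error = measurements[0], 1.0
    smoothed = [estimate]
    for z in measurements[1:]:
        error += process_var                      # predict: uncertainty grows
        gain = error / (error + measurement_var)  # trust placed in the new reading
        estimate += gain * (z - estimate)         # update with the innovation
        error *= (1.0 - gain)
        smoothed.append(estimate)
    return smoothed


def rms(window):
    """Root-mean-square amplitude, a common vibration feature."""
    return math.sqrt(sum(x * x for x in window) / len(window))


def zscore(values):
    """Normalize to zero mean and unit (population) standard deviation."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    return [(v - mean) / std for v in values] if std > 0 else [0.0] * len(values)


temps = [20.0, 20.4, 19.7, 20.1, 25.0, 20.2, 19.9]  # one noisy spike at index 4
smoothed = kalman_1d(temps)
print([round(s, 2) for s in smoothed])
print(rms([1.0, -1.0, 1.0, -1.0]))
print([round(z, 3) for z in zscore([1.0, 2.0, 3.0])])
```

The filter damps the isolated spike instead of passing it through. Normalization statistics should be computed on training data and shipped with the model, so that the device applies the same scaling at inference time.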

4. Select and Train a Model

- Choose models suited to resource-constrained environments:

  - TinyML models (Decision Trees, Small CNNs, SVMs)

  - Quantized models for edge devices.

- Train on cloud or workstation; deploy optimized version to edge hardware.

- Use transfer learning where labeled data is scarce.

5. Fine-Tune Your Model

- Perform hyperparameter optimization (Optuna, Ray Tune).

- Use cross-validation to ensure robustness across device variations.

- Apply model pruning and quantization for real-time constraints.
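One way to read "cross-validation across device variations" is to hold out all samples from one device at a time, so that each fold tests on hardware the model has never seen. The sketch below shows only the splitting logic; the function name `leave_one_device_out` and the device labels are hypothetical.

```python
def leave_one_device_out(device_ids):
    """Yield (held_out_device, train_indices, test_indices) for each device."""
    for held_out in sorted(set(device_ids)):
        train = [i for i, d in enumerate(device_ids) if d != held_out]
        test = [i for i, d in enumerate(device_ids) if d == held_out]
        yield held_out, train, test


# One label per sample, naming the device that recorded it.
device_ids = ["A", "A", "B", "B", "B", "C"]
folds = list(leave_one_device_out(device_ids))
for held_out, train, test in folds:
    print(held_out, train, test)
```

A plain random split would mix samples from the same device into both training and test sets and tend to overstate robustness. If scikit-learn is already in the pipeline, its `LeaveOneGroupOut` splitter provides the same grouping behaviour.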

6. Present Your Solution

- Combine design insights and performance results in a compelling narrative.

- Show:

  - Improved user experience metrics (speed, reliability)

  - Technical metrics (latency, model accuracy)

  - System metrics (power consumption, network utilization)

- Use visual storytelling (dashboards, videos, prototypes).

7. Launch, Monitor, and Maintain Your System

Deployment & Containerization

Package edge and cloud components in Docker containers for portability. Use Kubernetes or KubeEdge for orchestrating edge–cloud workflows. We will discuss this at length later.

Monitoring & Feedback Loops

- Implement MLOps pipelines with:

  - Continuous monitoring (Prometheus, Grafana)

  - Automated retraining (Airflow, Jenkins)

  - Drift detection and alerting (Evidently AI)

- Continuously integrate user feedback for model and UX improvements.
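To give a feel for what drift detection computes, the sketch below implements the Population Stability Index (PSI) for a single feature in plain Python. It does not use or imitate the API of any monitoring tool named above; the function name `psi`, the bin count, and the 0.2 alert threshold (a commonly quoted rule of thumb, treated here as an assumption) are illustrative choices.

```python
import math


def psi(reference, current, bins=10):
    """Population Stability Index between a reference and a current sample."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for a constant reference

    def fractions(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(sample), 1e-6) for c in counts]

    ref, cur = fractions(reference), fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


PSI_ALERT = 0.2  # assumed threshold; tune per feature and use case

reference = [i / 100 for i in range(100)]  # training-time distribution
shifted = [x + 0.5 for x in reference]     # simulated sensor drift
stable_score = psi(reference, reference)
drift_score = psi(reference, shifted)
print(stable_score, drift_score, drift_score > PSI_ALERT)
```

In a pipeline, a score above the threshold would raise an alert or trigger the automated retraining job, closing the loop between monitoring and model updates.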