Lecture 9 - Part 2

Contents

Case Study: Predictive Maintenance at the Edge

1. Scenario Description

Instructions: Read the scenario below carefully. The highlighted terms indicate key technical requirements that map to specific edge computing tools.

Scenario: A global manufacturing company operates 15 automated factories. Each factory contains hundreds of robotic arms that must be monitored for mechanical fatigue and overheating. To prevent costly downtime, the company needs a system that can predict hardware failure in real time, even when the factory's internet connection is unstable.

The Challenge: The robotic arms use various proprietary sensors and communication protocols. Additionally, the maintenance algorithms are frequently updated by the data science team and must be deployed across thousands of devices without manual intervention or halting production. The system must be highly reliable, automatically recovering if software services fail.

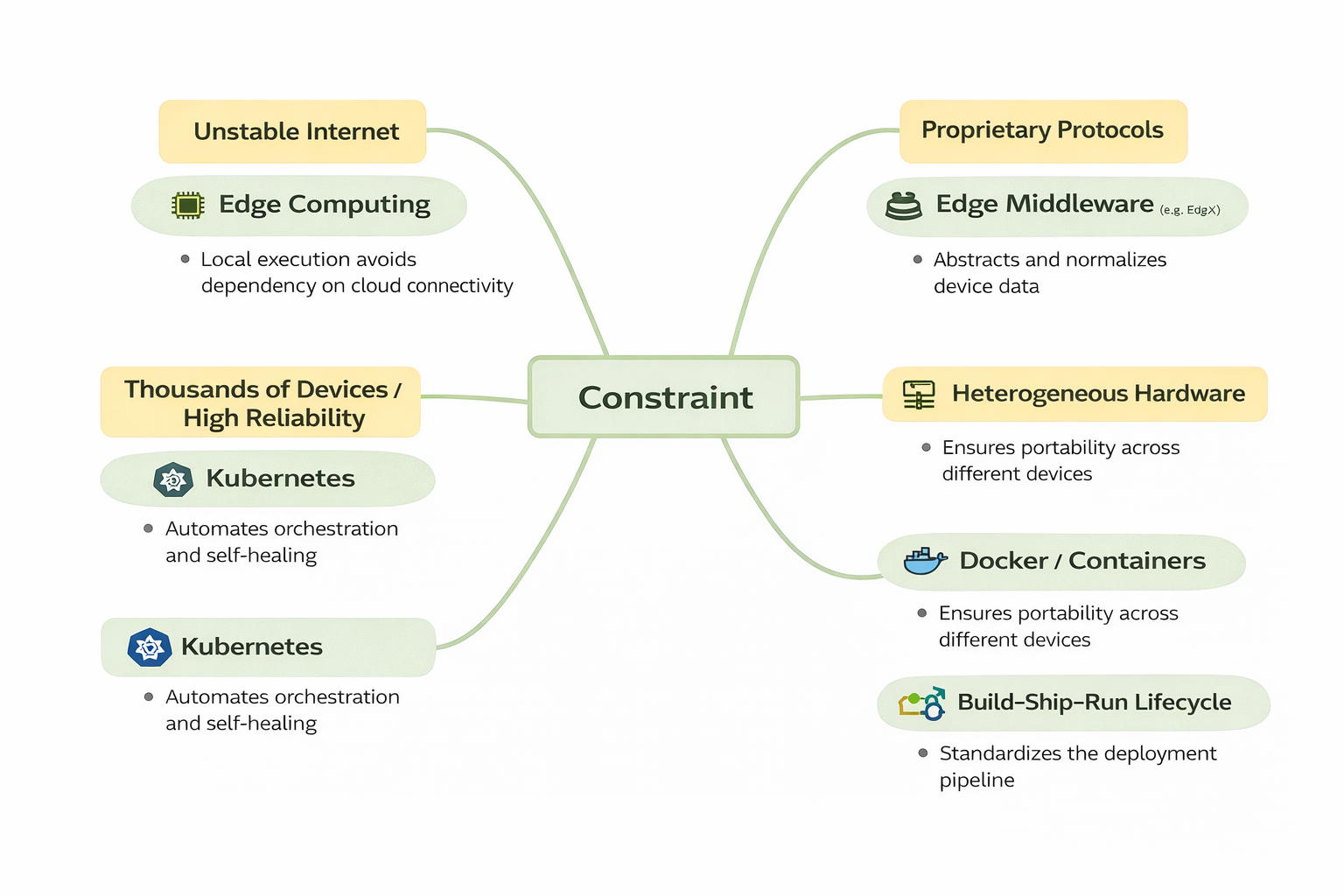

2. Thinking Map: From Constraints to Solutions

Connect the business constraints found in the text to the correct technical solution.

Figure 1: Mind map of constraints and solutions

- Constraint: Unstable Internet
  - Solution: Edge Computing (Local execution avoids dependency on cloud connectivity).

- Constraint: Proprietary Protocols
  - Solution: Edge Middleware (e.g., EdgeX) (Abstracts and normalizes device data).

- Constraint: Heterogeneous Hardware
  - Solution: Docker/Containers (Ensures portability across different devices).

- Constraint: Thousands of Devices / High Reliability
  - Solution: Kubernetes (Automates orchestration and self-healing).

- Constraint: Frequent Updates
  - Solution: Build–Ship–Run Lifecycle (Standardizes the deployment pipeline).

3. The Operational Solution

A. Packaging with Docker

Developers use Docker to package maintenance services into container images. Each image bundles the application logic, libraries, and configuration, so the software behaves the same way in the test lab as it does on the factory floor. Because containers share the host OS kernel instead of booting a full guest operating system, they are lightweight enough for resource-constrained edge gateways.

B. Normalization with Middleware

To handle the "proprietary sensors," EdgeX Foundry (middleware) is deployed. It acts as a translation layer, converting raw sensor data into a standardized format that the analytics microservices can understand.
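The translation-layer idea can be illustrated with a minimal Python sketch. This is not the EdgeX Foundry API; the vendor names, payload fields, and the `Reading` schema are all hypothetical and only show the adapter pattern that such middleware applies: one parser per protocol, one common output format.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Reading:
    """Common format handed to the analytics services (hypothetical schema)."""
    device_id: str
    metric: str
    value: float
    unit: str


def parse_vendor_a(raw: dict) -> Reading:
    # Assumption: vendor A reports temperature in tenths of a degree Celsius.
    return Reading(raw["id"], "temperature", raw["temp_dC"] / 10.0, "C")


def parse_vendor_b(raw: dict) -> Reading:
    # Assumption: vendor B reports temperature in Fahrenheit.
    celsius = (raw["tempF"] - 32.0) * 5.0 / 9.0
    return Reading(raw["serial"], "temperature", round(celsius, 2), "C")


# One adapter per protocol, looked up by a protocol tag.
ADAPTERS: Dict[str, Callable[[dict], Reading]] = {
    "vendor_a": parse_vendor_a,
    "vendor_b": parse_vendor_b,
}


def normalize(protocol: str, raw: dict) -> Reading:
    """Translate a raw, vendor-specific payload into the common format."""
    try:
        adapter = ADAPTERS[protocol]
    except KeyError:
        raise ValueError(f"no adapter registered for protocol {protocol!r}")
    return adapter(raw)
```

With this structure, supporting a new sensor type means registering one more adapter; the analytics microservices only ever see `Reading` objects.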

C. Orchestration with Kubernetes

Because there are "thousands of devices," manual management is impractical. A lightweight Kubernetes variant is used to:

- Maintain Desired State: Ensure the specified number of analytics containers is always running.

- Self-Heal: Automatically restart a container if it crashes.

- Roll Out Updates: Deploy new AI models across all 15 factories without manual intervention, replacing containers gradually so that production is not halted.
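The orchestration behaviours listed above can be sketched as a small reconciliation loop. The code below is a toy model under stated assumptions, not Kubernetes code: `reconcile`, `rolling_update`, and the container names are hypothetical. It compares the observed state with the desired state and returns the corrective actions, then shows an update applied in batches so that some replicas keep serving throughout.

```python
from typing import Dict, Iterator, List


def reconcile(desired_replicas: int, running: Dict[str, str]) -> List[str]:
    """Return the actions needed to bring `running` to the desired state.

    `running` maps a container name to its status ("running" or "crashed").
    """
    actions: List[str] = []
    # Self-heal: restart anything that has crashed.
    for name, status in sorted(running.items()):
        if status == "crashed":
            actions.append(f"restart {name}")
    # Maintain desired state: add or remove replicas to match the target.
    diff = desired_replicas - len(running)
    if diff > 0:
        actions.extend(f"start replica-{len(running) + i}" for i in range(diff))
    elif diff < 0:
        extra = sorted(running)[desired_replicas:]
        actions.extend(f"stop {name}" for name in extra)
    return actions


def rolling_update(
    replicas: List[str], new_version: str, batch_size: int = 1
) -> Iterator[List[str]]:
    """Yield the fleet after each batch, so older replicas serve until replaced."""
    current = list(replicas)
    for start in range(0, len(current), batch_size):
        for i in range(start, min(start + batch_size, len(current))):
            current[i] = new_version
        yield list(current)
```

For example, `reconcile(3, {"replica-0": "running", "replica-1": "crashed"})` asks for one restart and one new replica. A real orchestrator runs this comparison continuously rather than once.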

D. The Cloud-Edge Collaboration

| Feature | Edge Responsibility (Local) | Cloud Responsibility (Central) |
|---|---|---|
| Execution | Real-time AI inference and failure alerts. | Long-term data storage and fleet-wide analytics. |
| Connectivity | Operates independently during outages. | Provides global visibility and cross-site coordination. |
| Development | Feeds logs/metrics back to the cloud. | Re-trains AI models and builds new images. |

4. Discussion Questions & Solutions

Note

Q1: Why can't we just use a traditional "installer" or script to update these 15 factories?

Answer: Manual installation does not scale and is difficult to maintain across heterogeneous environments. Containers provide a "standardized box" that ensures reproducibility, while Kubernetes automates the deployment to thousands of devices at once.

Note

Q2: What happens to the "Continuous Evolution" loop if the factory loses internet for two days?

Answer: The "Run" phase continues locally at the edge, maintaining safety and operations. However, the "Build" and "Ship" phases are paused; the cloud cannot receive new data to retrain models, and the edge cannot pull updated images until connectivity is restored.
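The behaviour described in the answer to Q2 resembles a store-and-forward pattern, sketched below in Python. Everything here is an illustrative assumption: the `EdgeNode` class, the fixed temperature threshold standing in for the deployed model, and the `upload` callback standing in for the cloud link. Inference runs locally regardless of connectivity, while records for the cloud wait in a bounded buffer until the link returns.

```python
from collections import deque
from typing import Callable, Deque, List


class EdgeNode:
    def __init__(self, threshold_c: float, max_buffer: int = 10_000):
        # Assumption: a simple threshold stands in for the deployed AI model.
        self.threshold_c = threshold_c
        # Bounded buffer: once full, the oldest records are dropped first.
        self.buffer: Deque[dict] = deque(maxlen=max_buffer)

    def process(self, device_id: str, temperature_c: float) -> bool:
        """Run local inference; works with or without connectivity."""
        alert = temperature_c > self.threshold_c
        self.buffer.append(
            {"device": device_id, "temp_c": temperature_c, "alert": alert}
        )
        return alert

    def sync(self, online: bool, upload: Callable[[List[dict]], None]) -> int:
        """Forward buffered records when the link is up; return how many were sent."""
        if not online or not self.buffer:
            return 0
        batch = list(self.buffer)
        upload(batch)          # hypothetical cloud upload call
        self.buffer.clear()    # only cleared after upload returns without error
        return len(batch)
```

The buffer size is a design trade-off: a two-day outage with hundreds of arms per factory can produce more records than a gateway can hold, so a real deployment would need to decide what to drop or aggregate.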